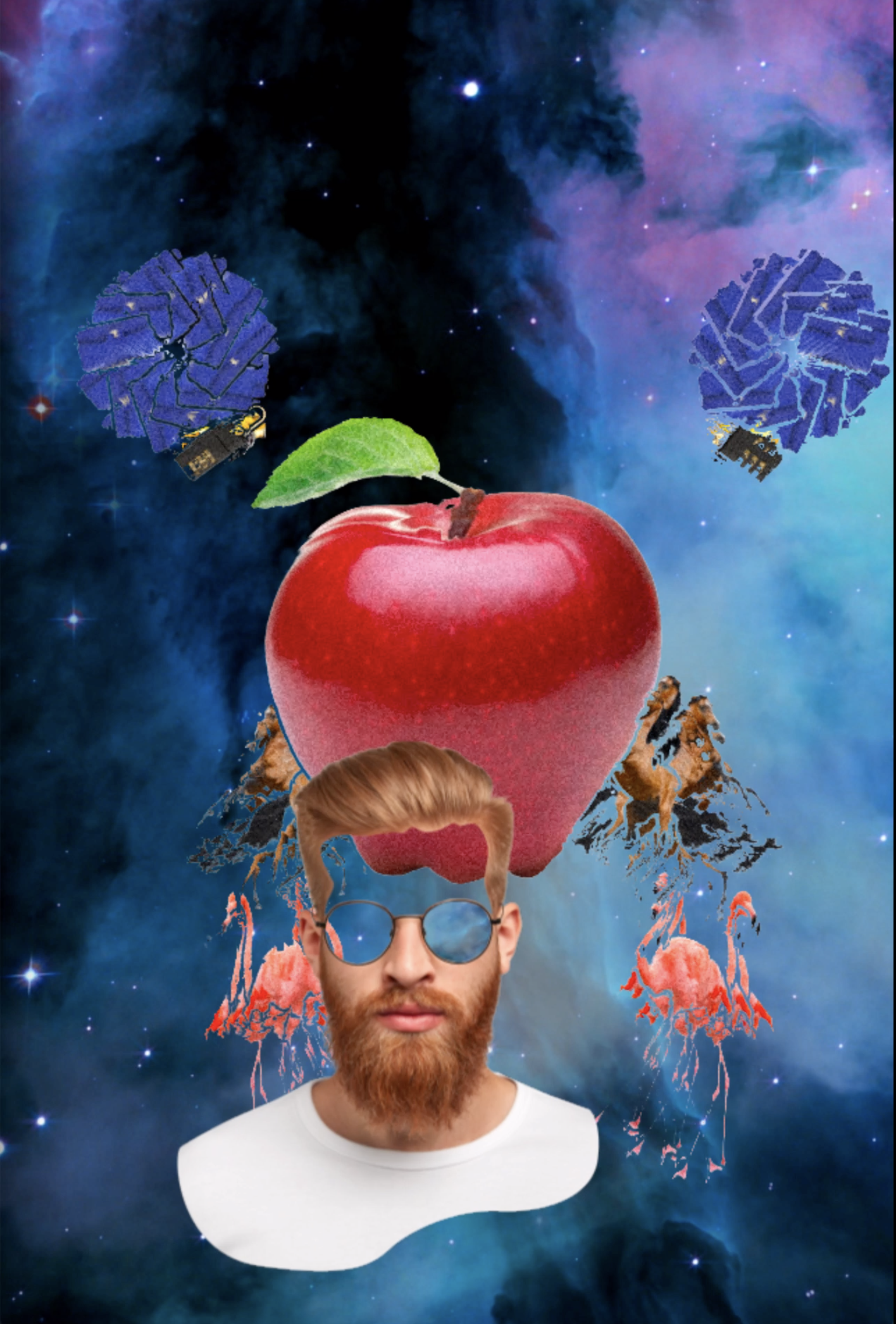

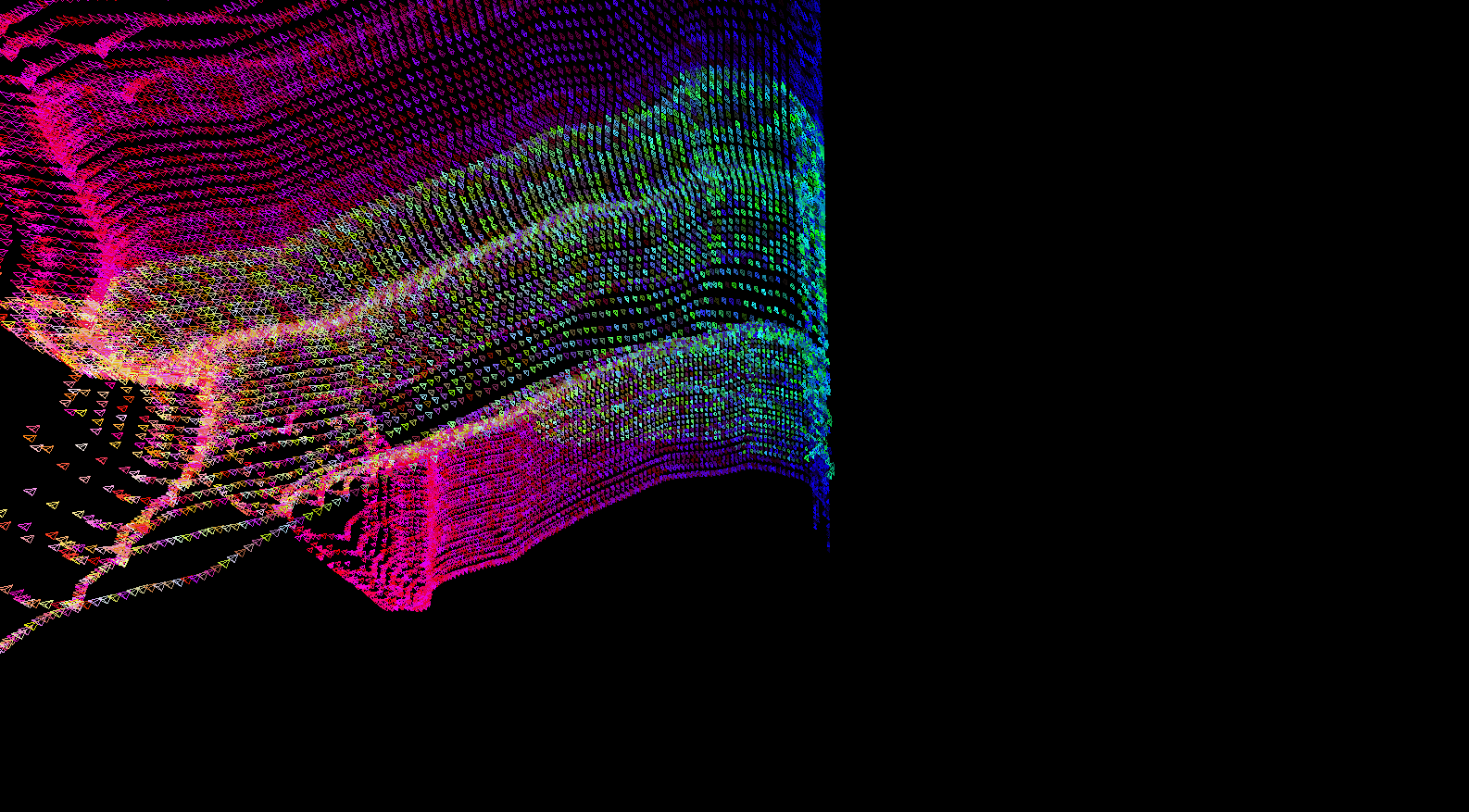

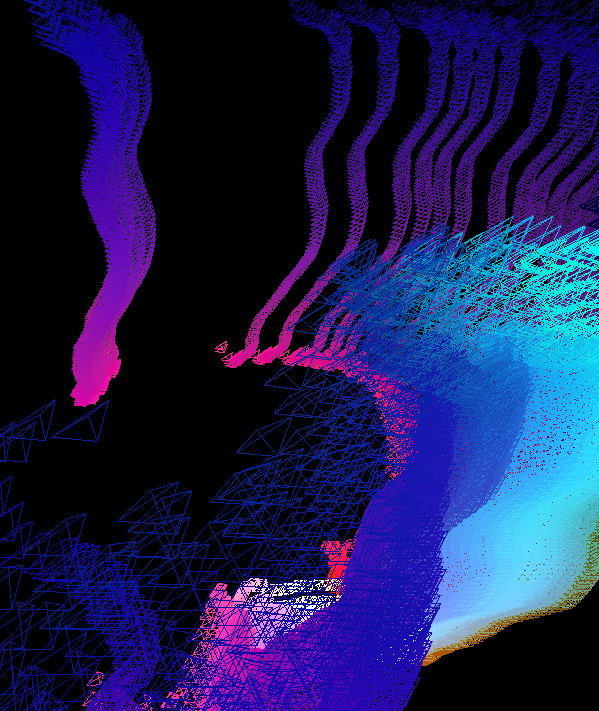

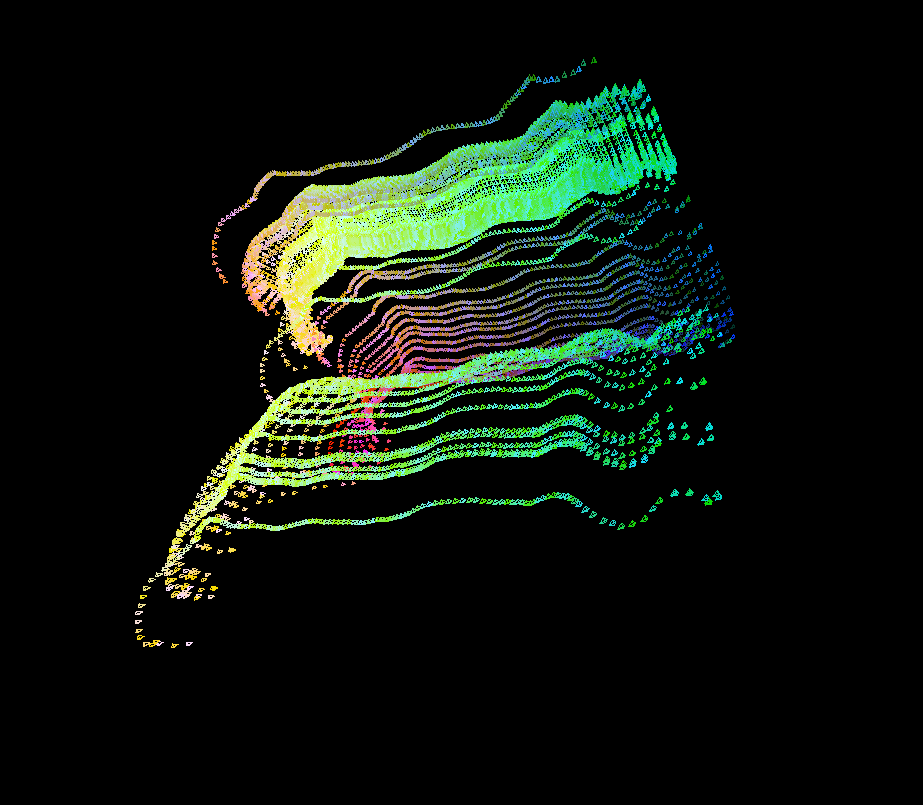

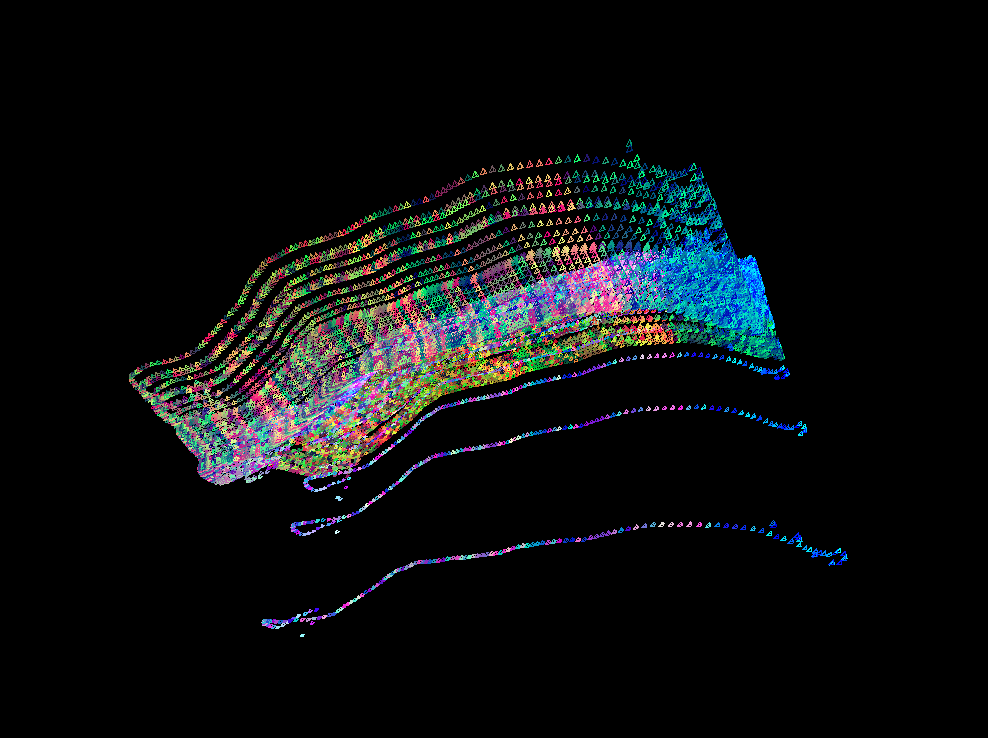

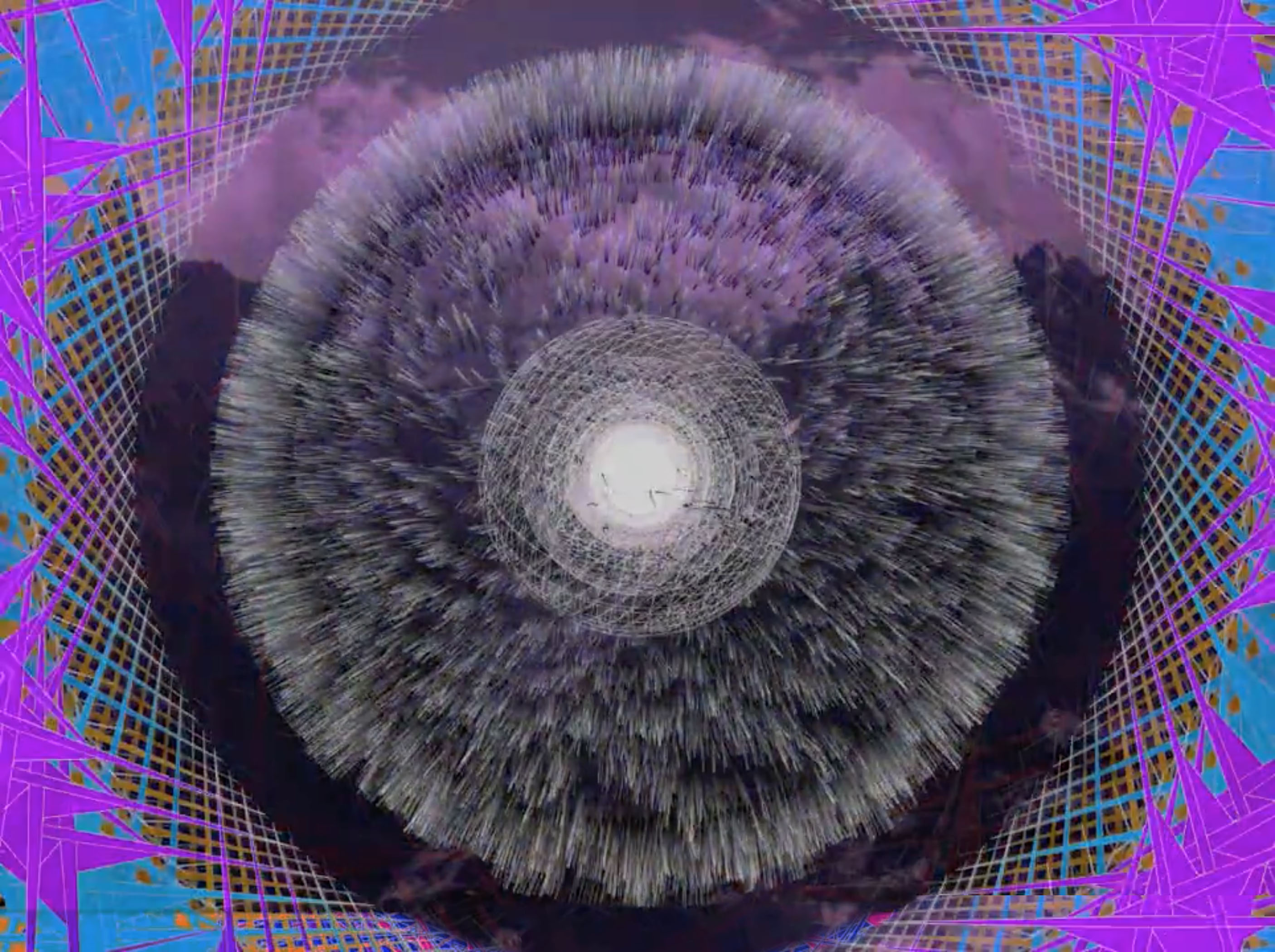

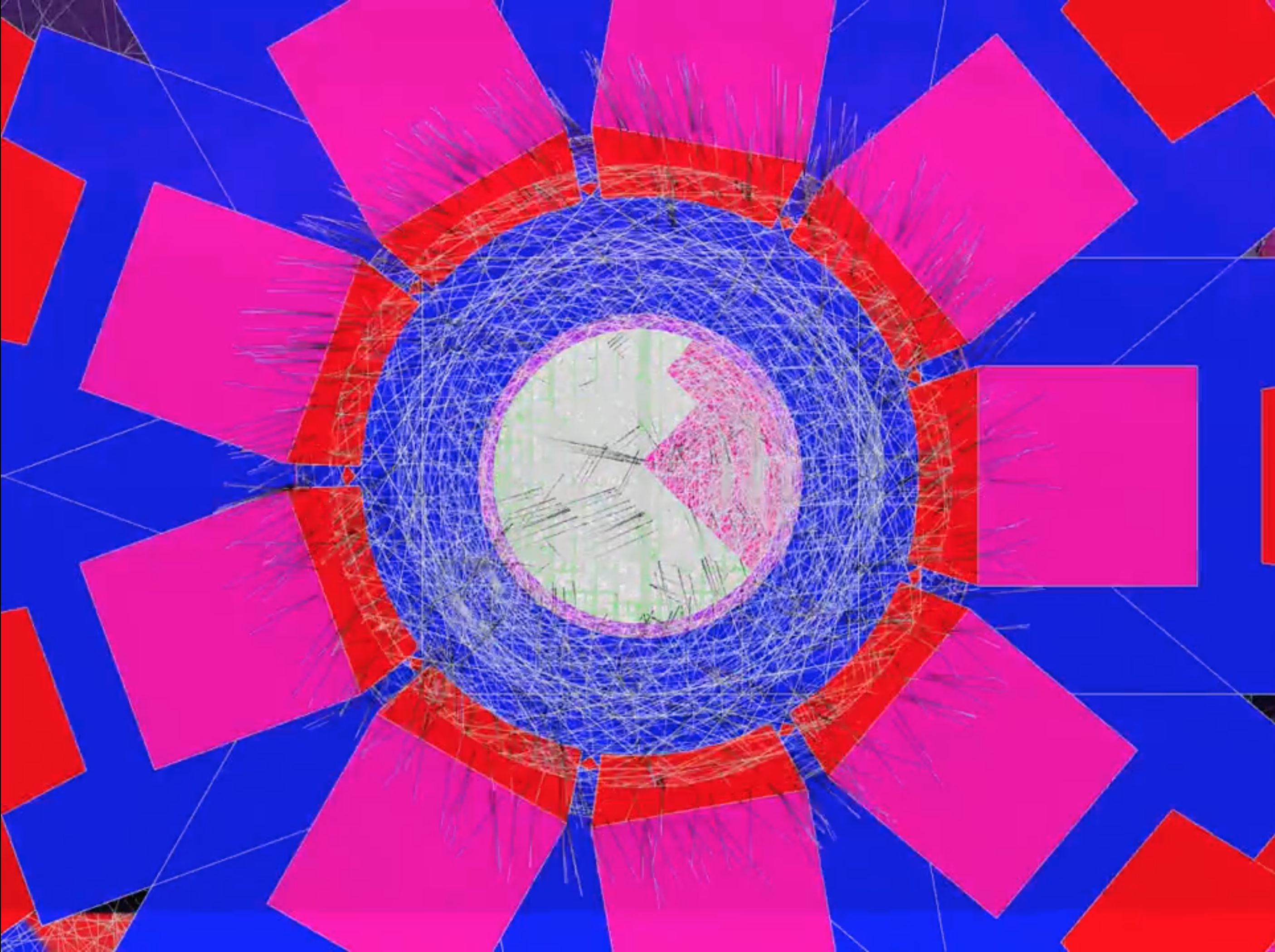

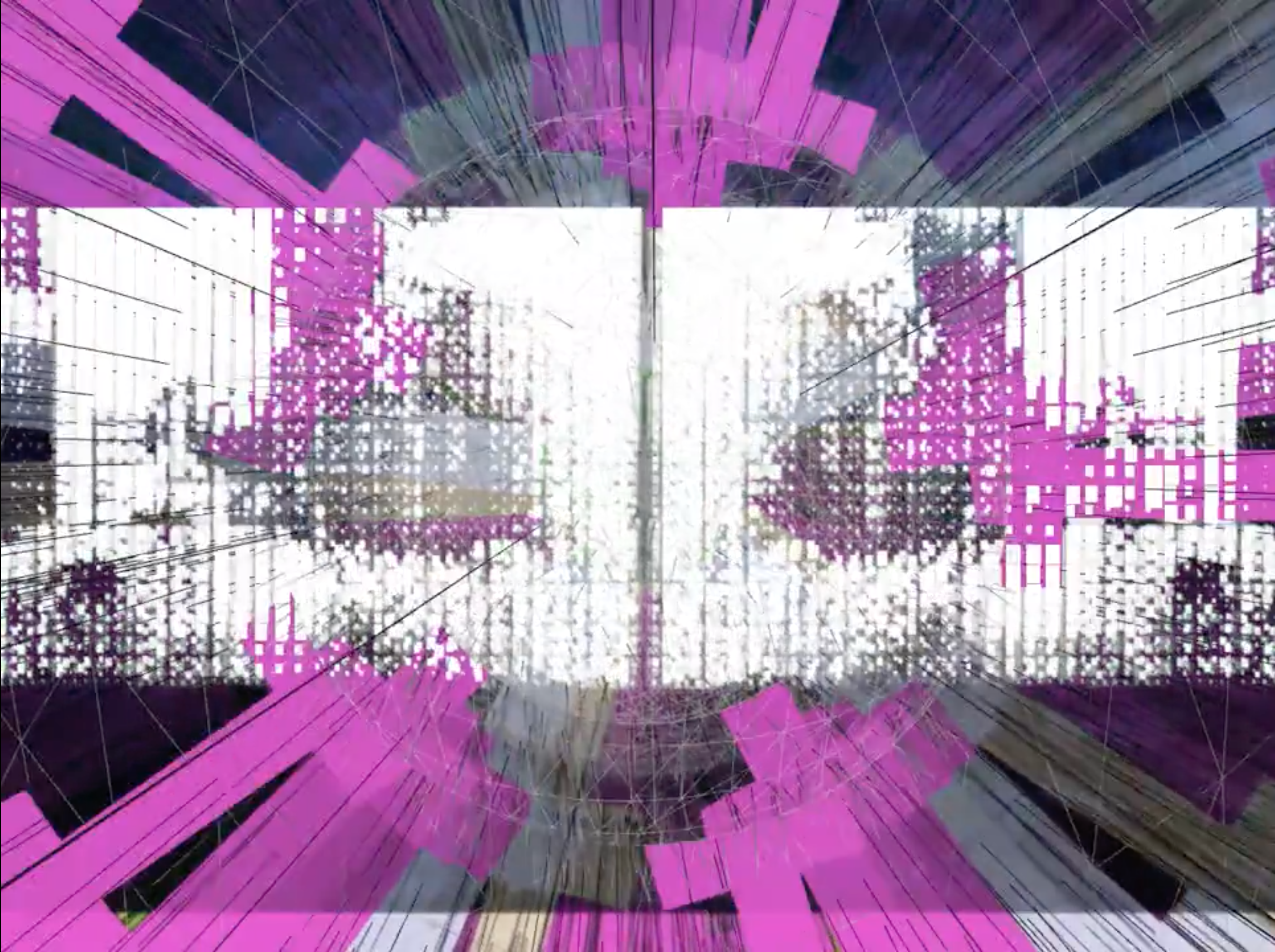

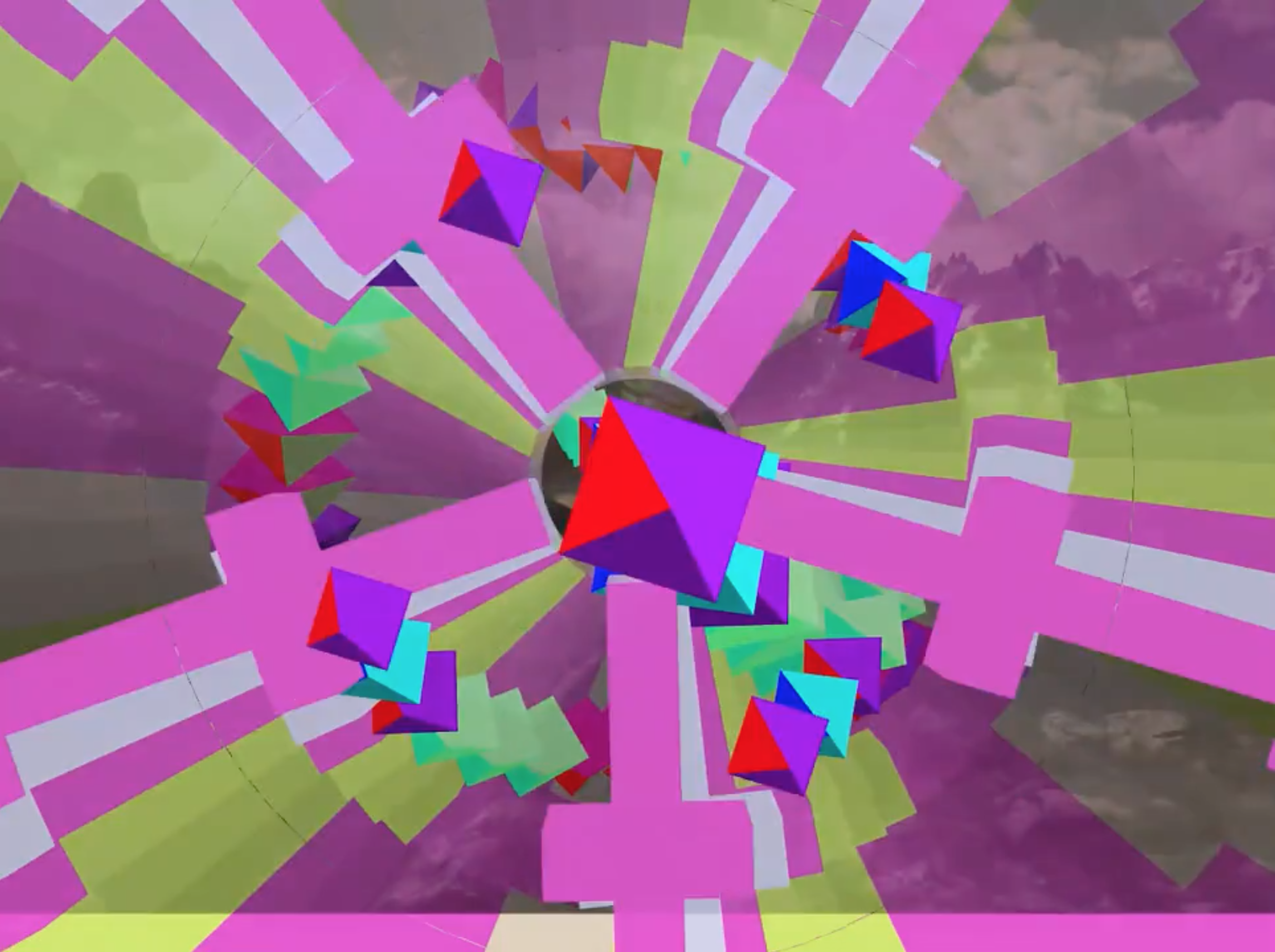

Generative collage for ShutterStock.

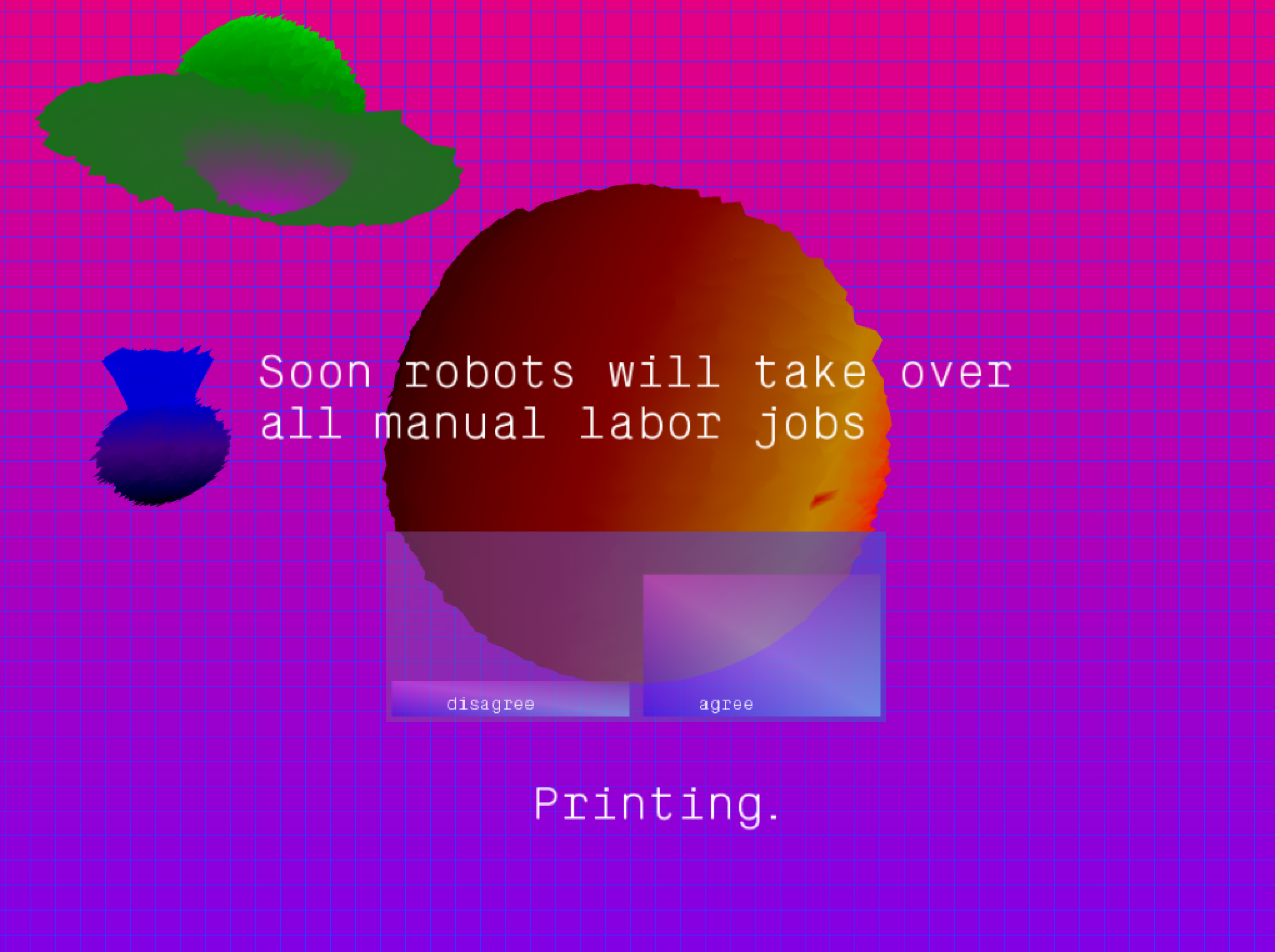

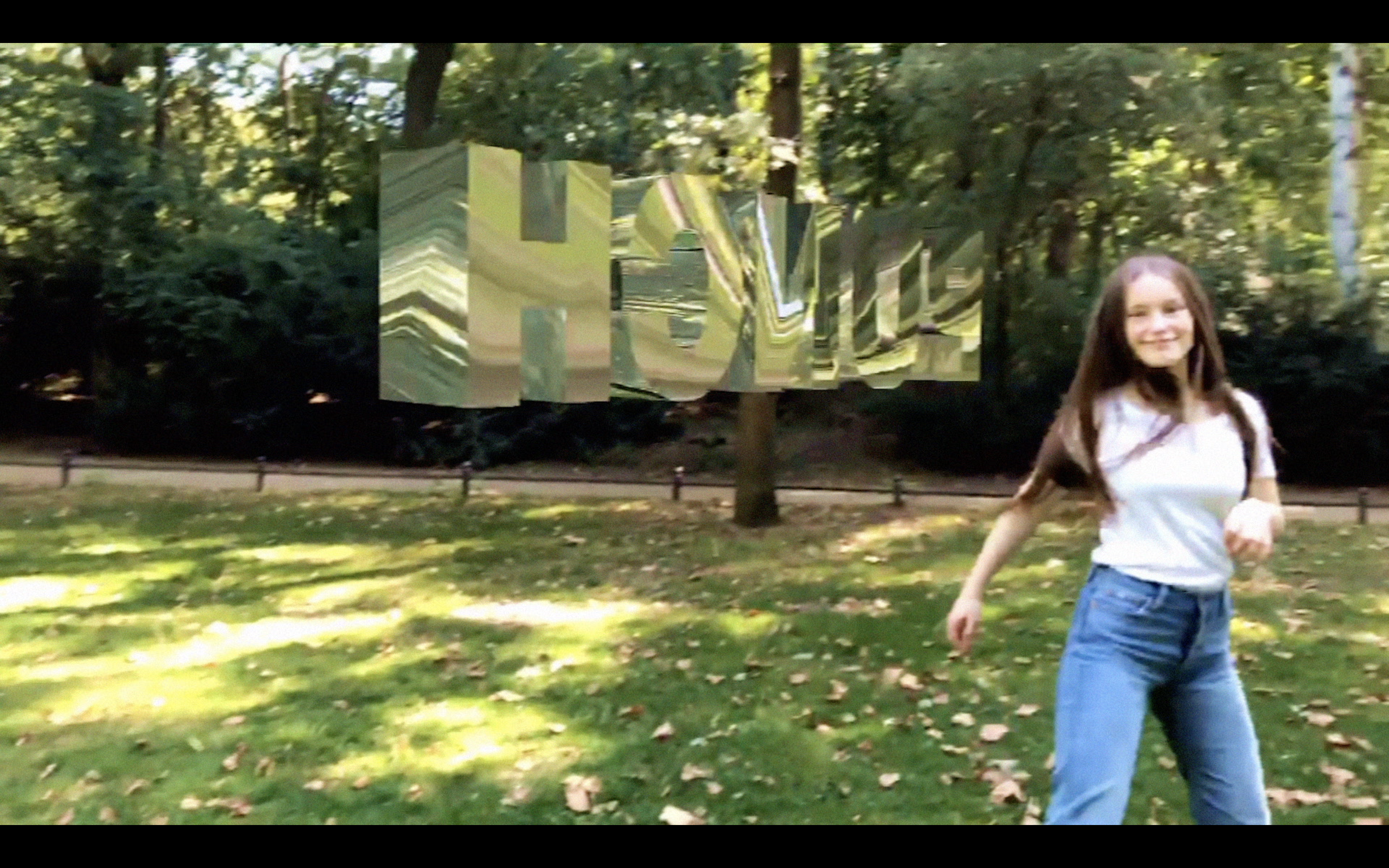

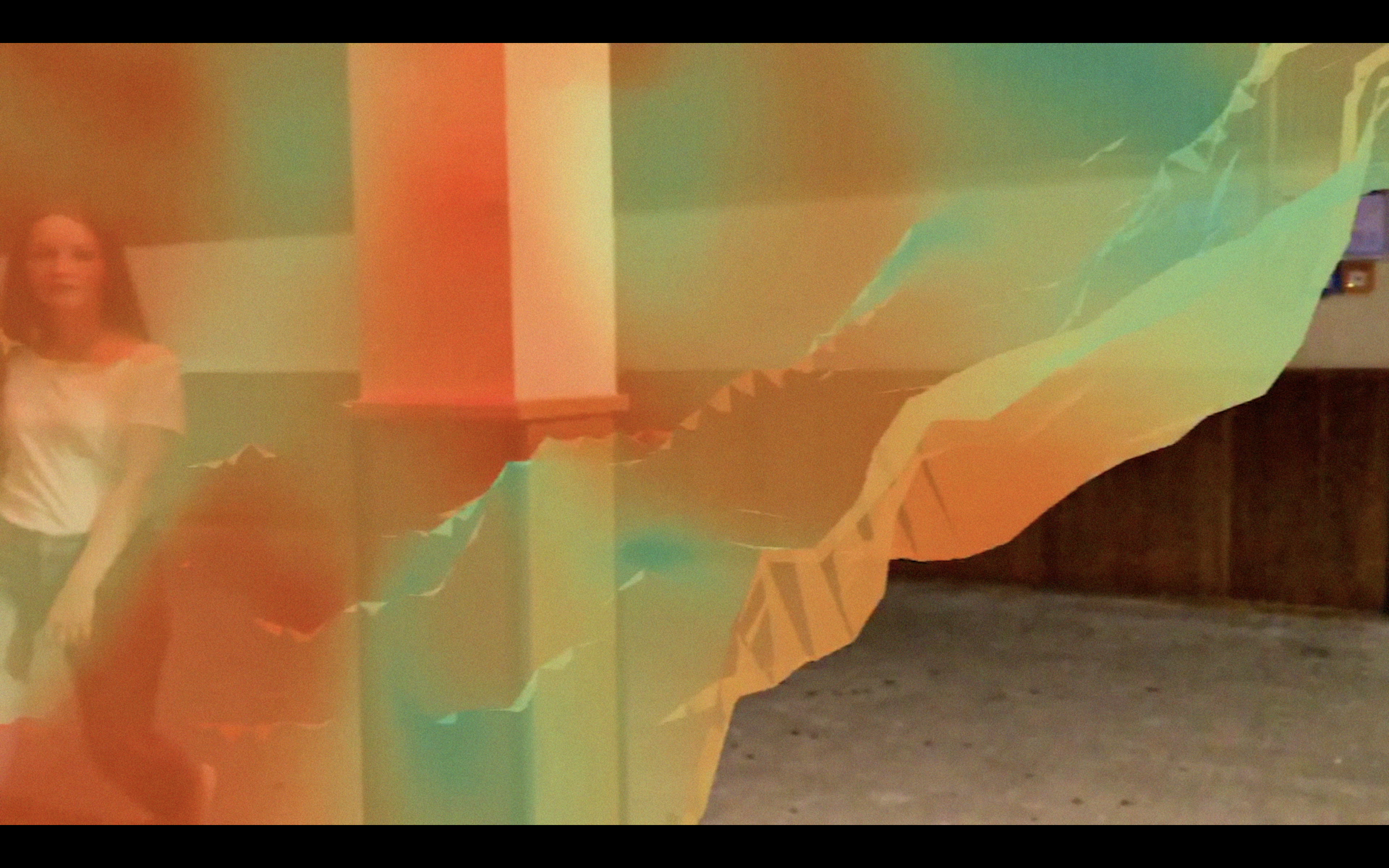

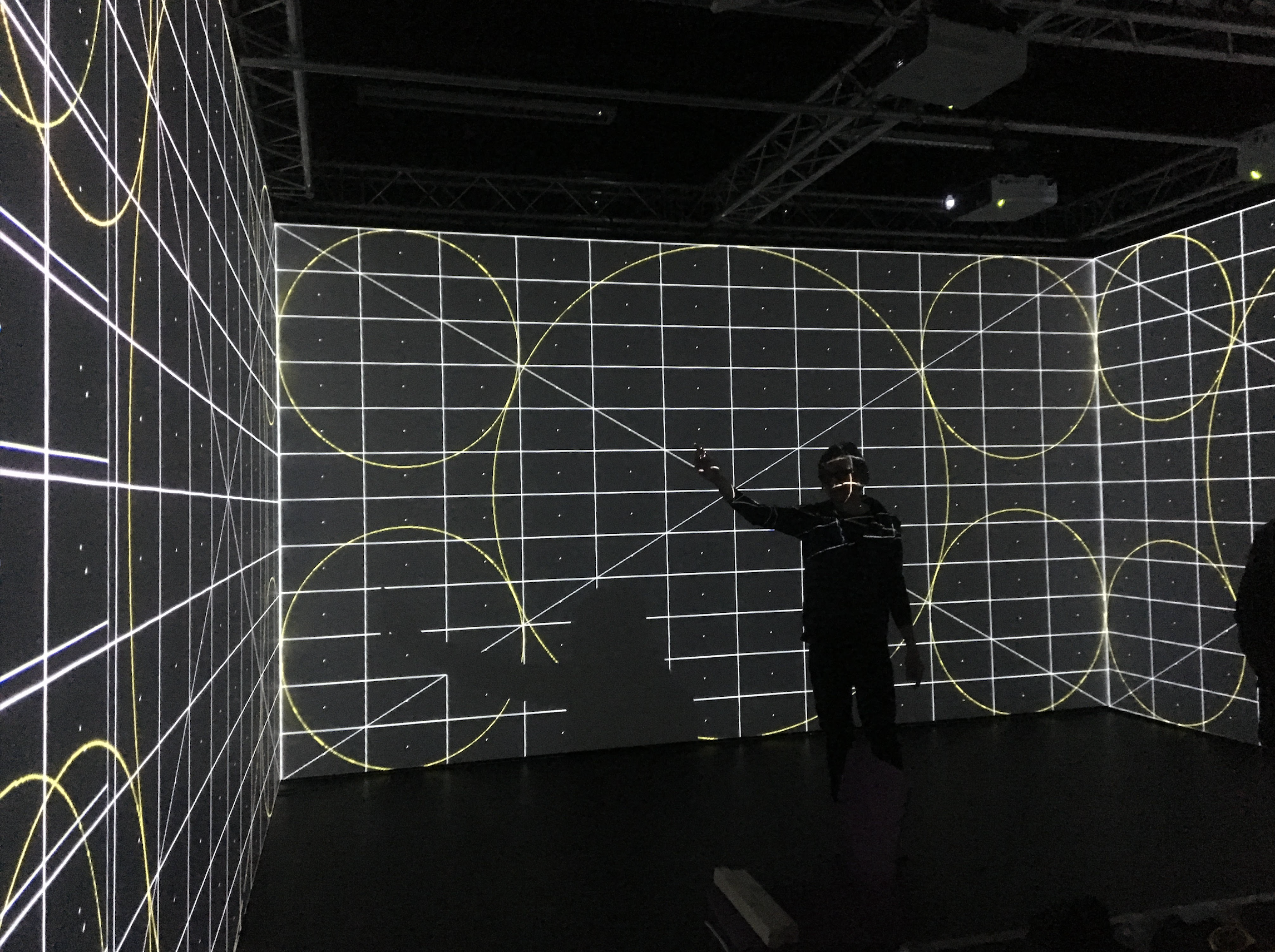

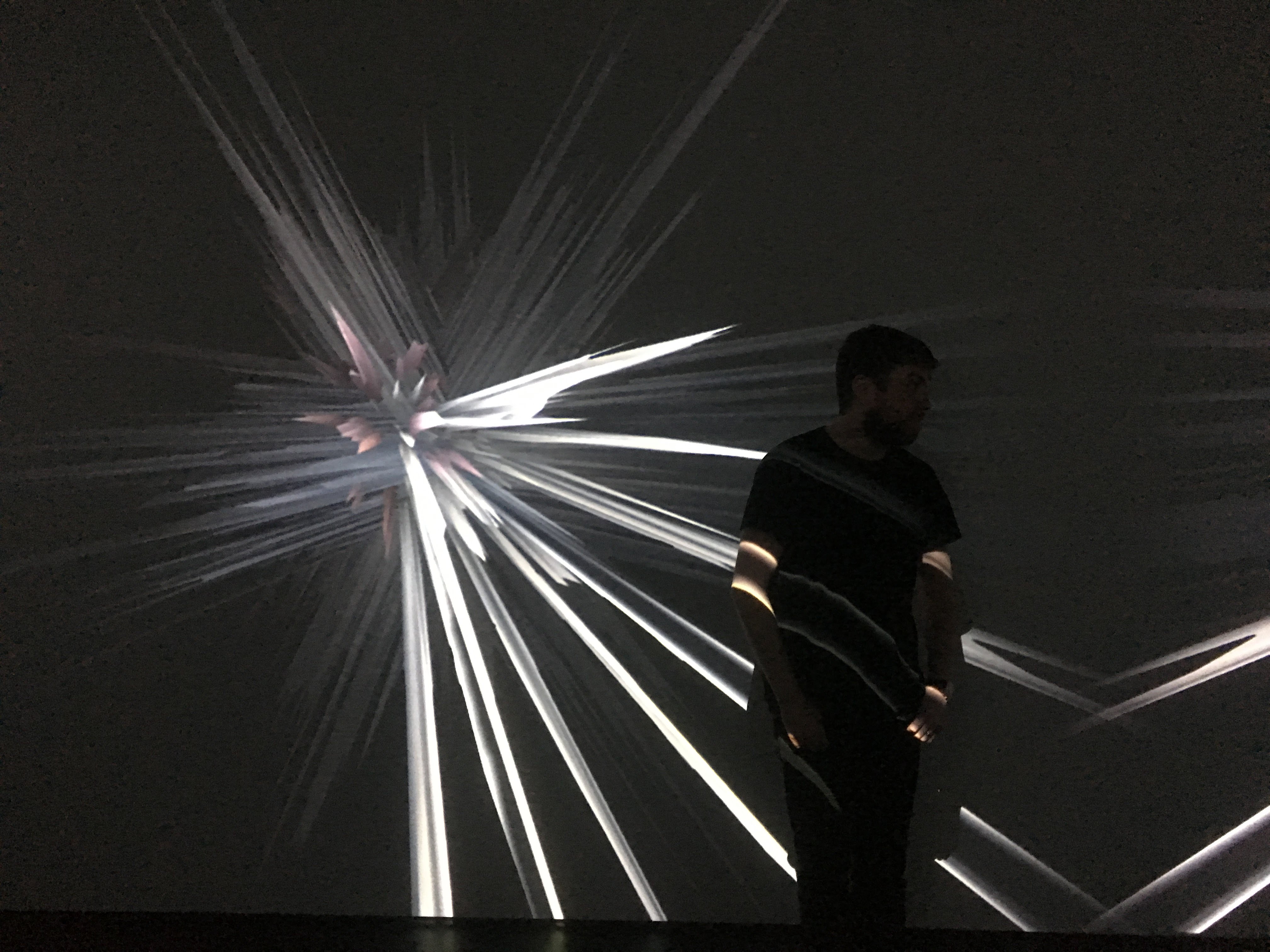

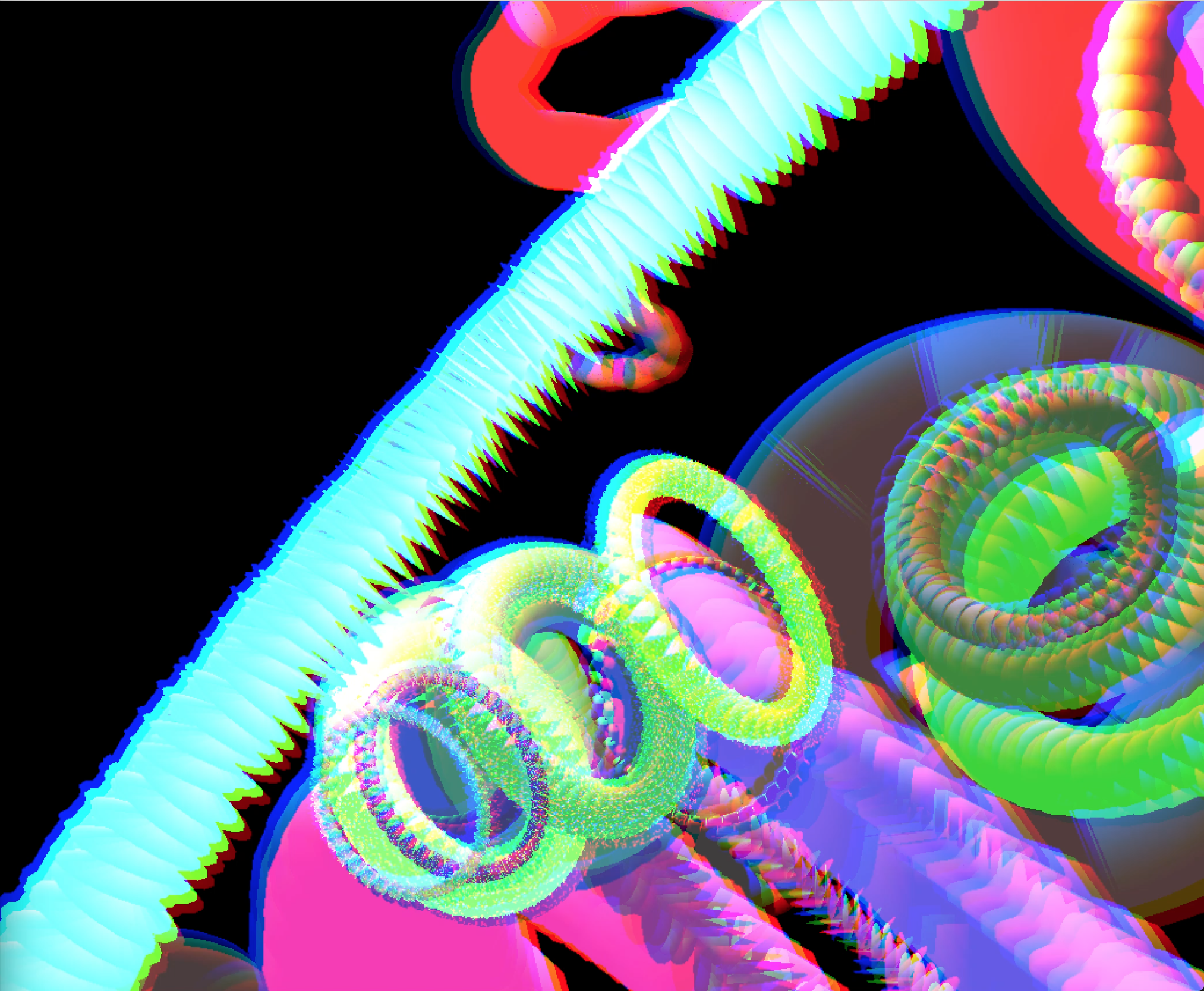

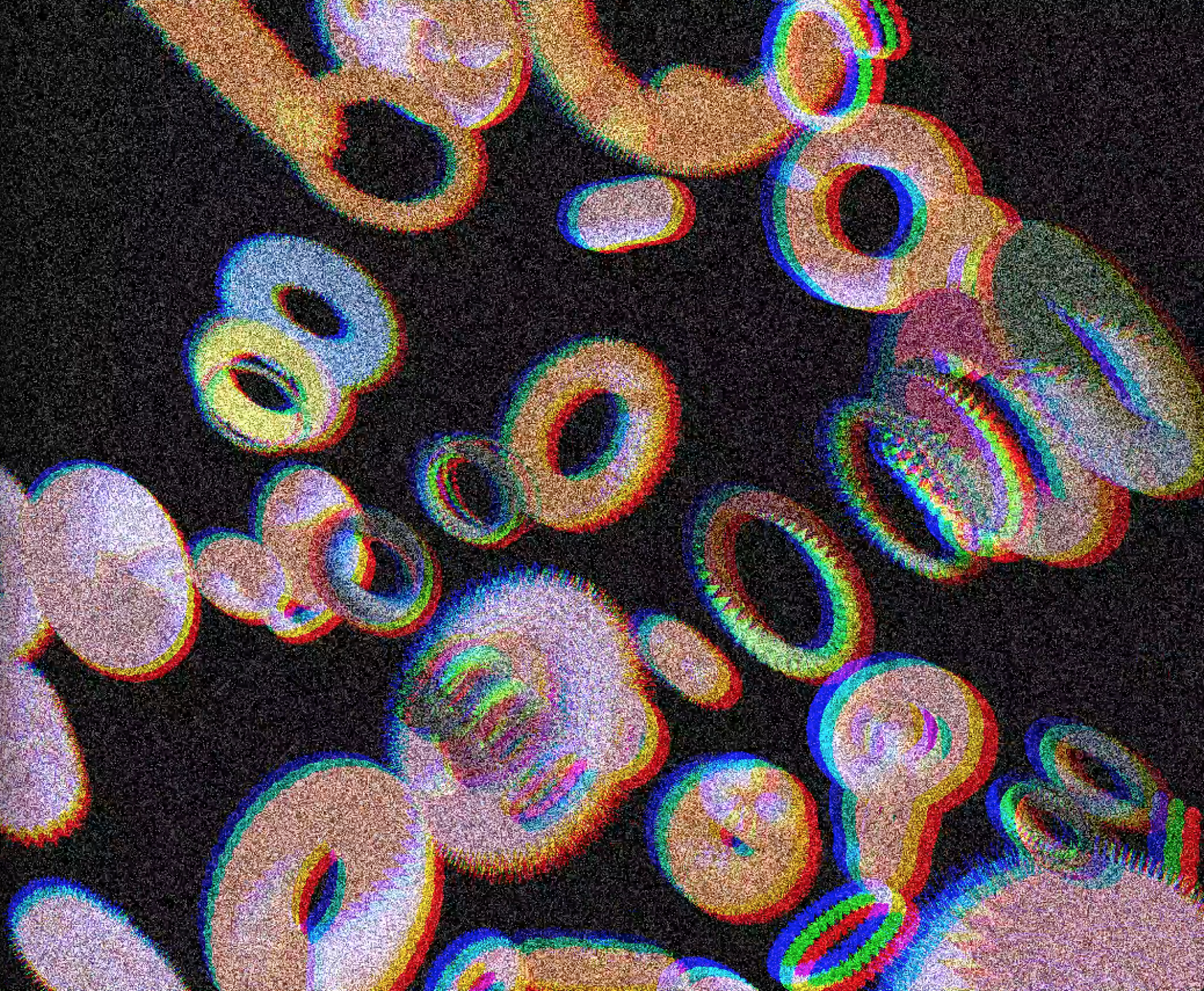

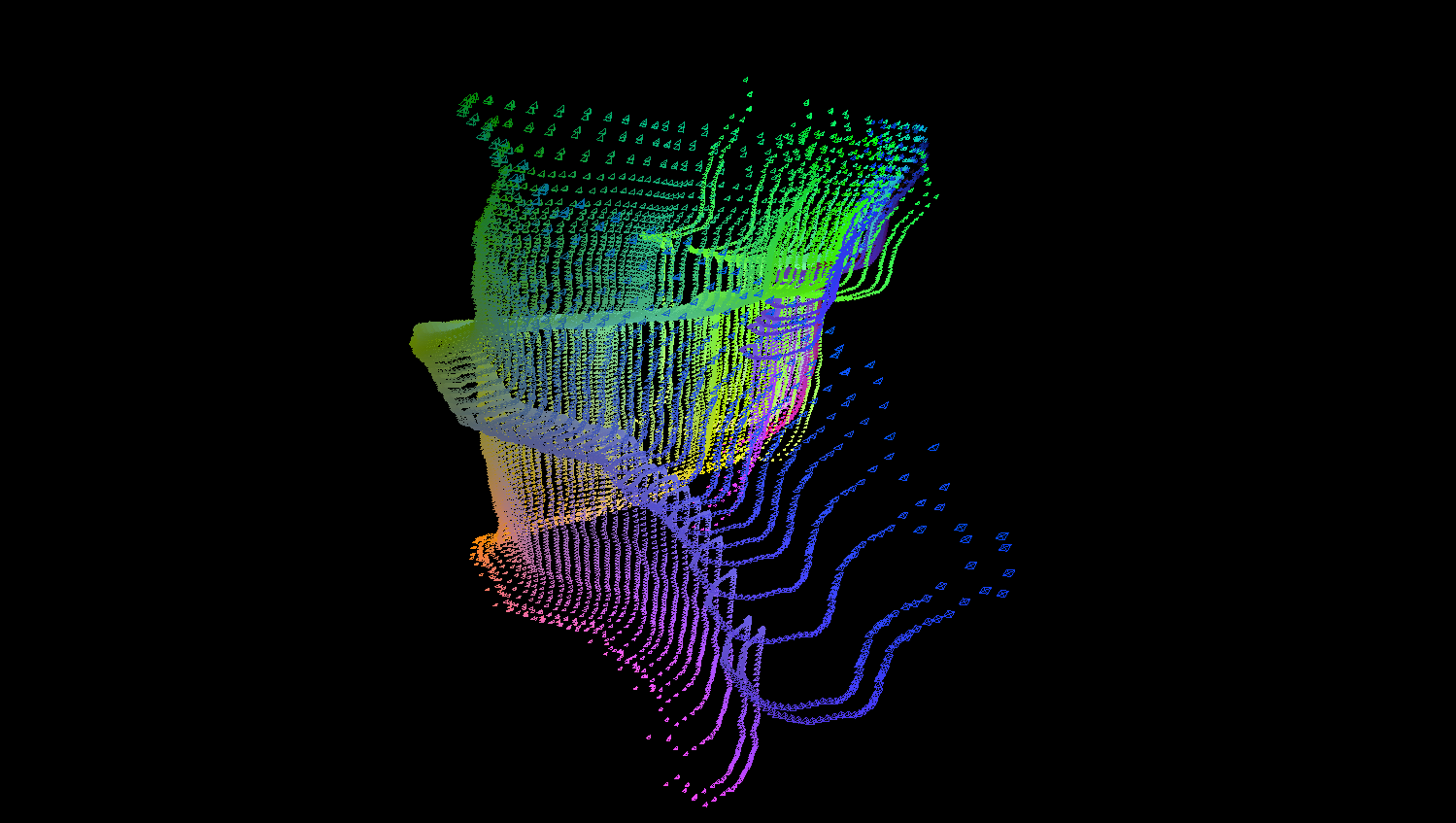

As part of Masino Bay, we designed an installation for the D&AD Festival 2019: a generative collage that learns which images people like, based on how much attention they give the piece.

The project was sponsored by ShutterStock and GreatCoat Film.

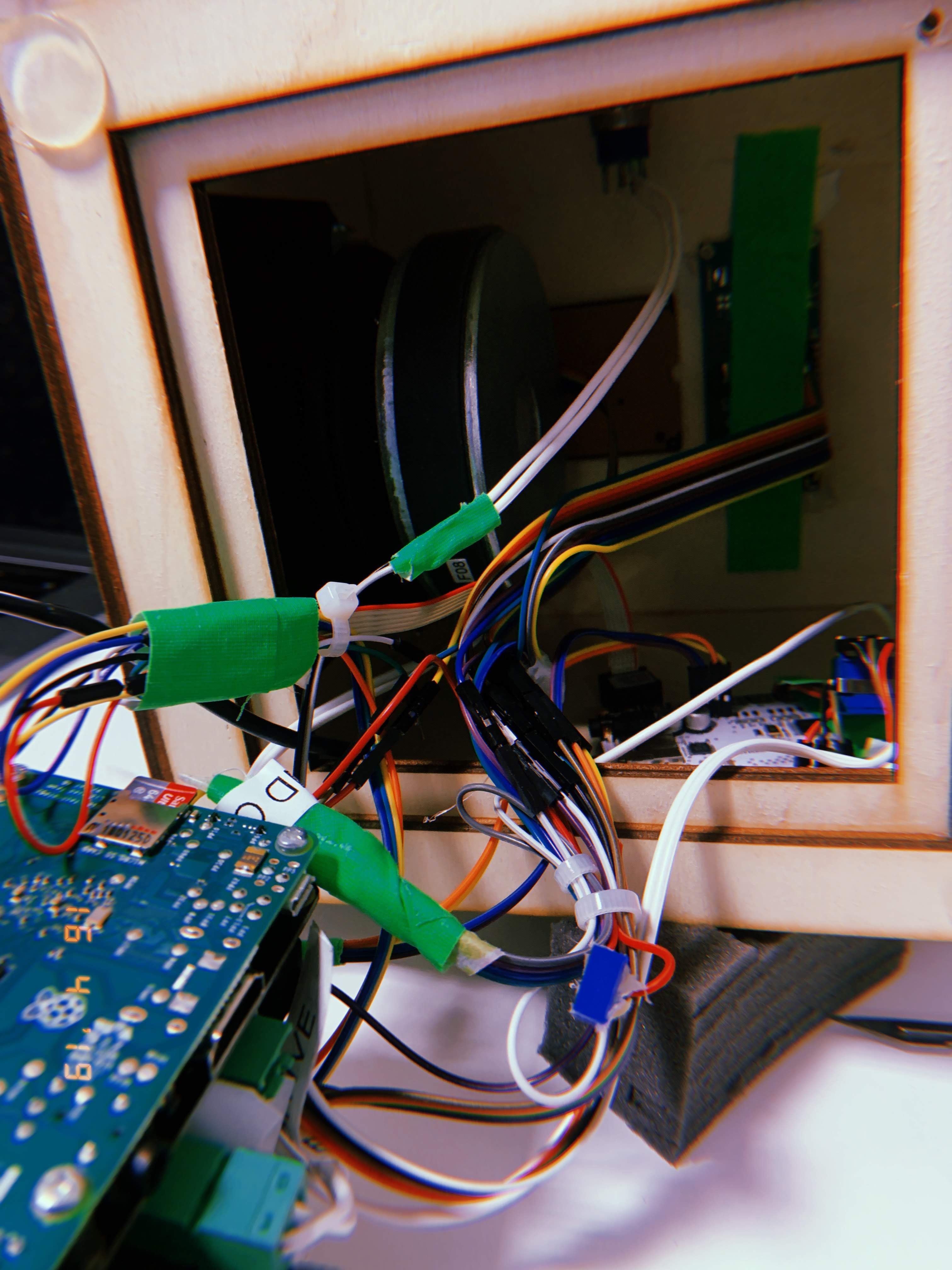

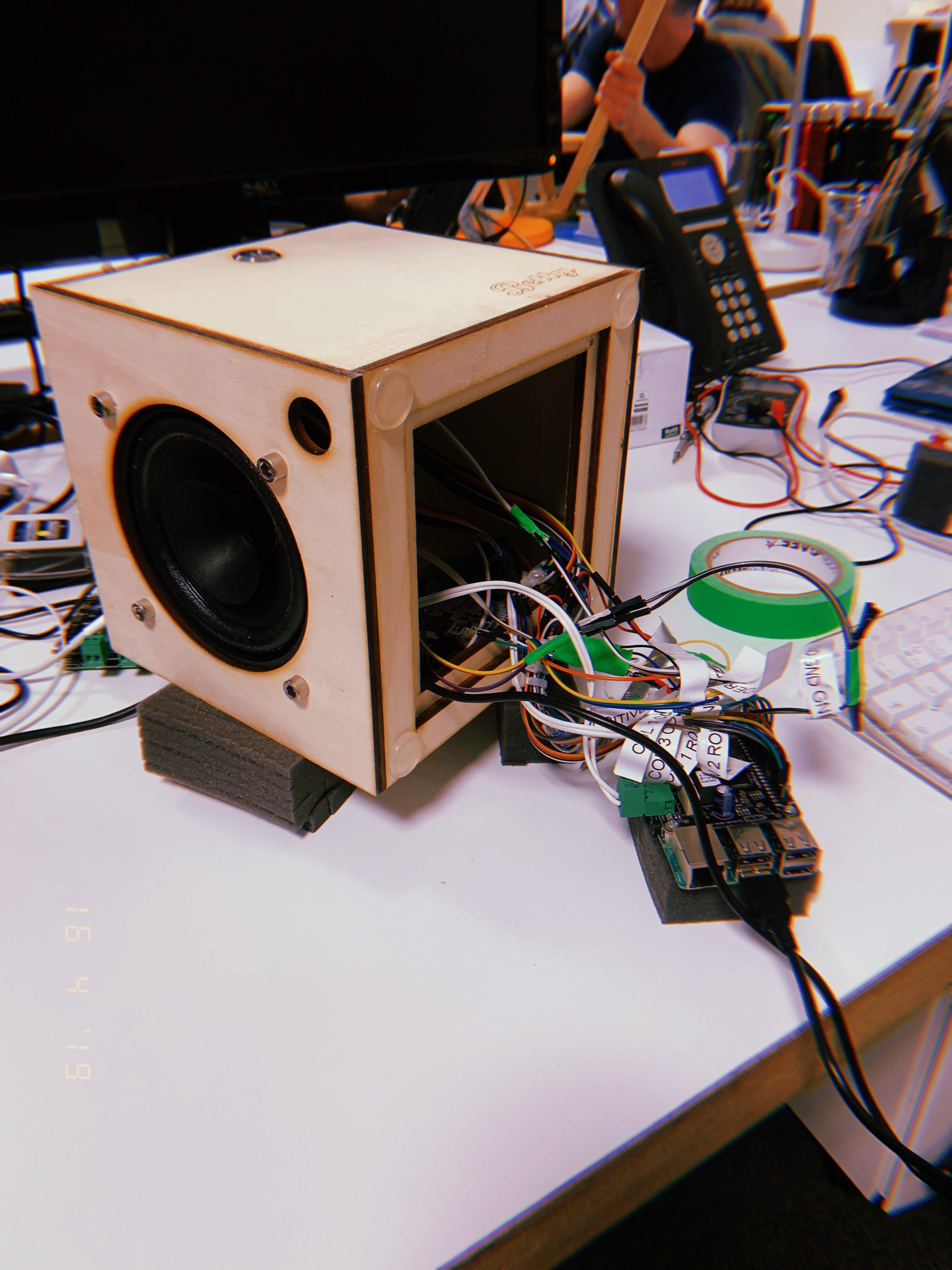

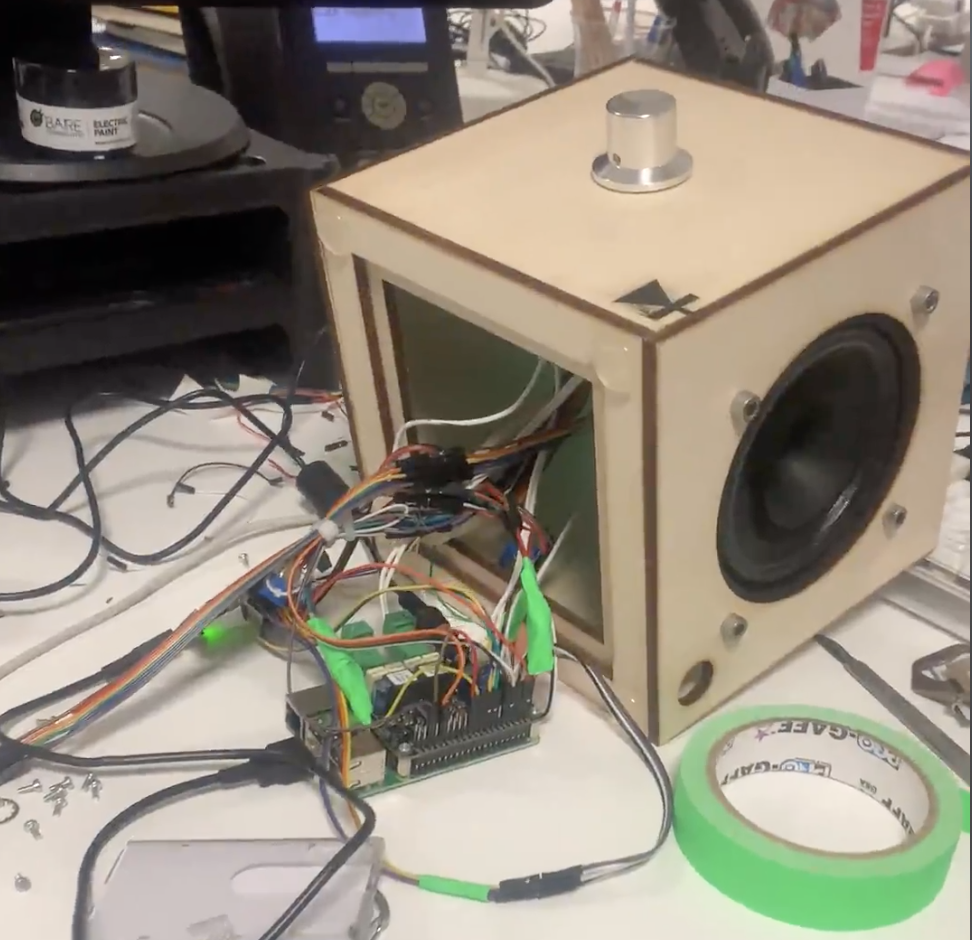

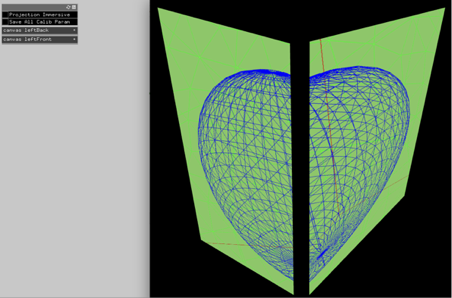

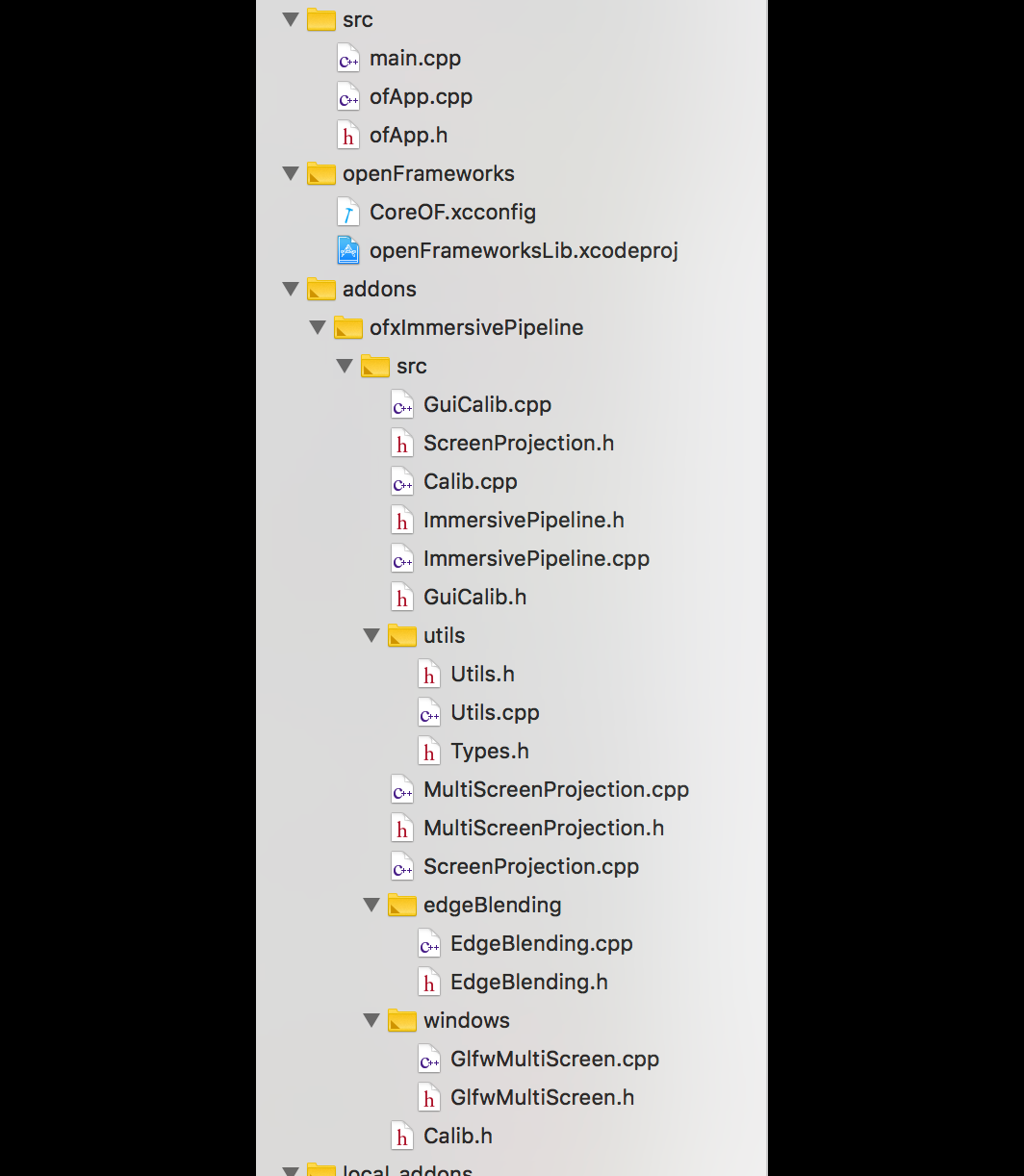

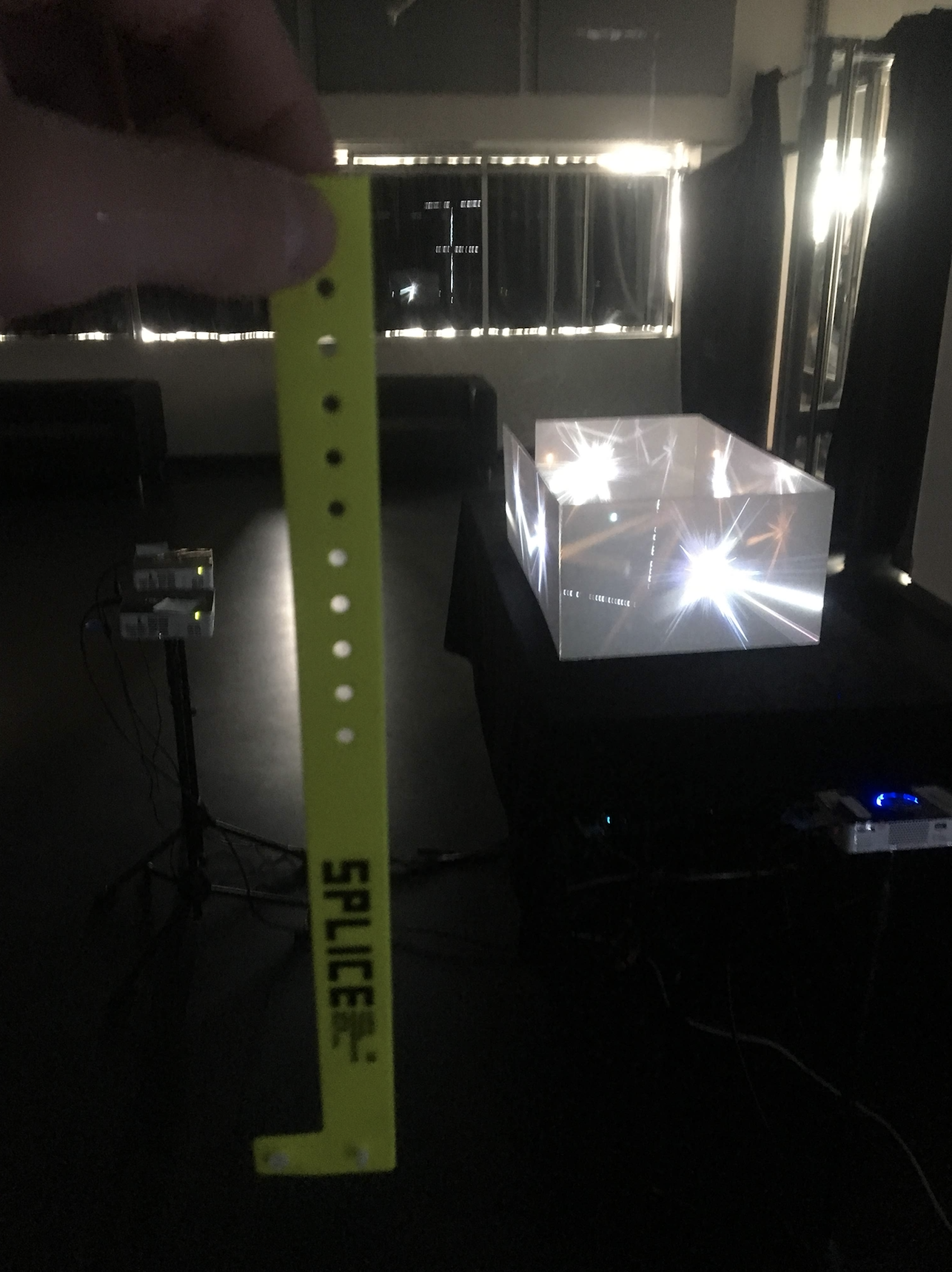

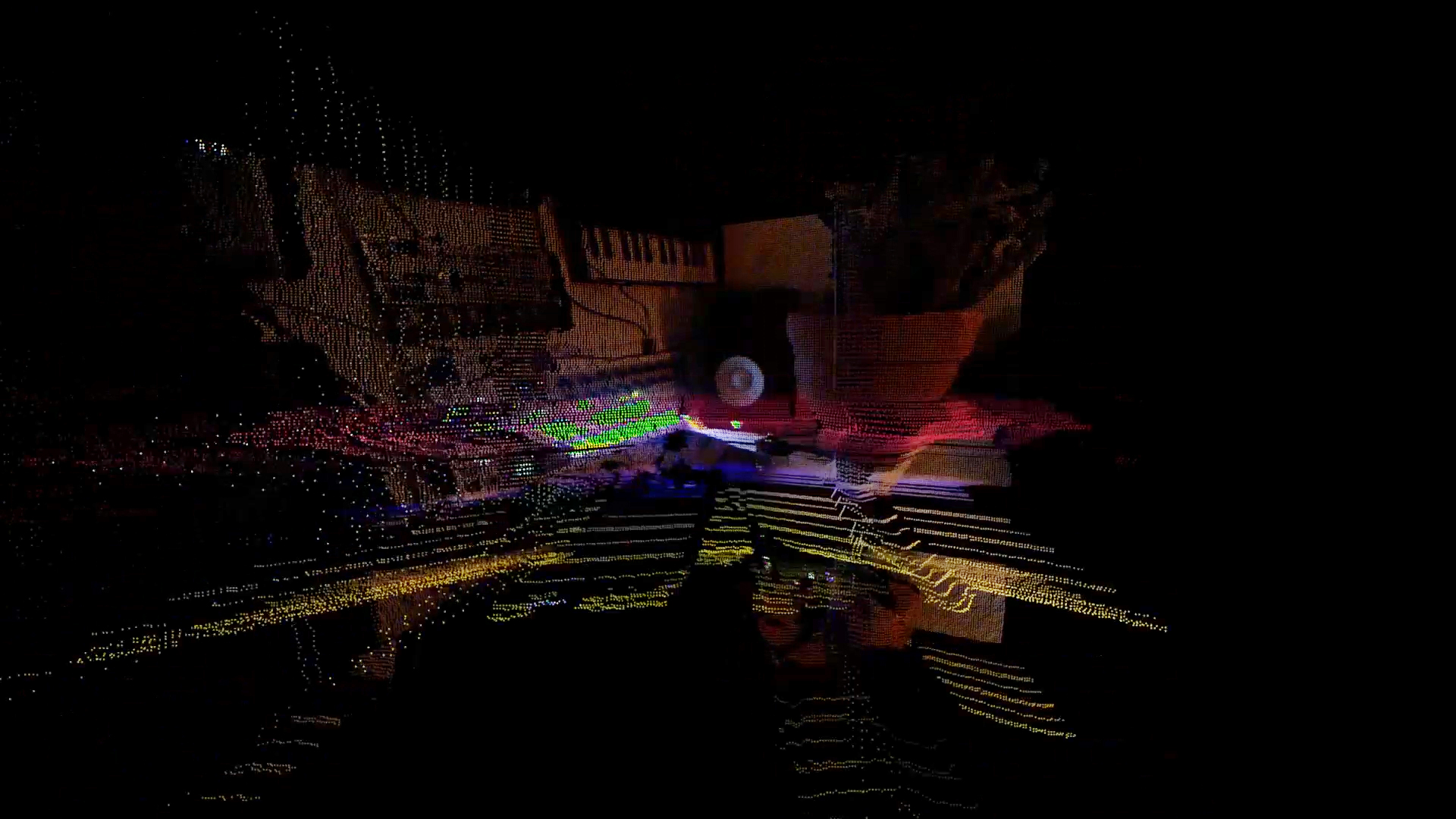

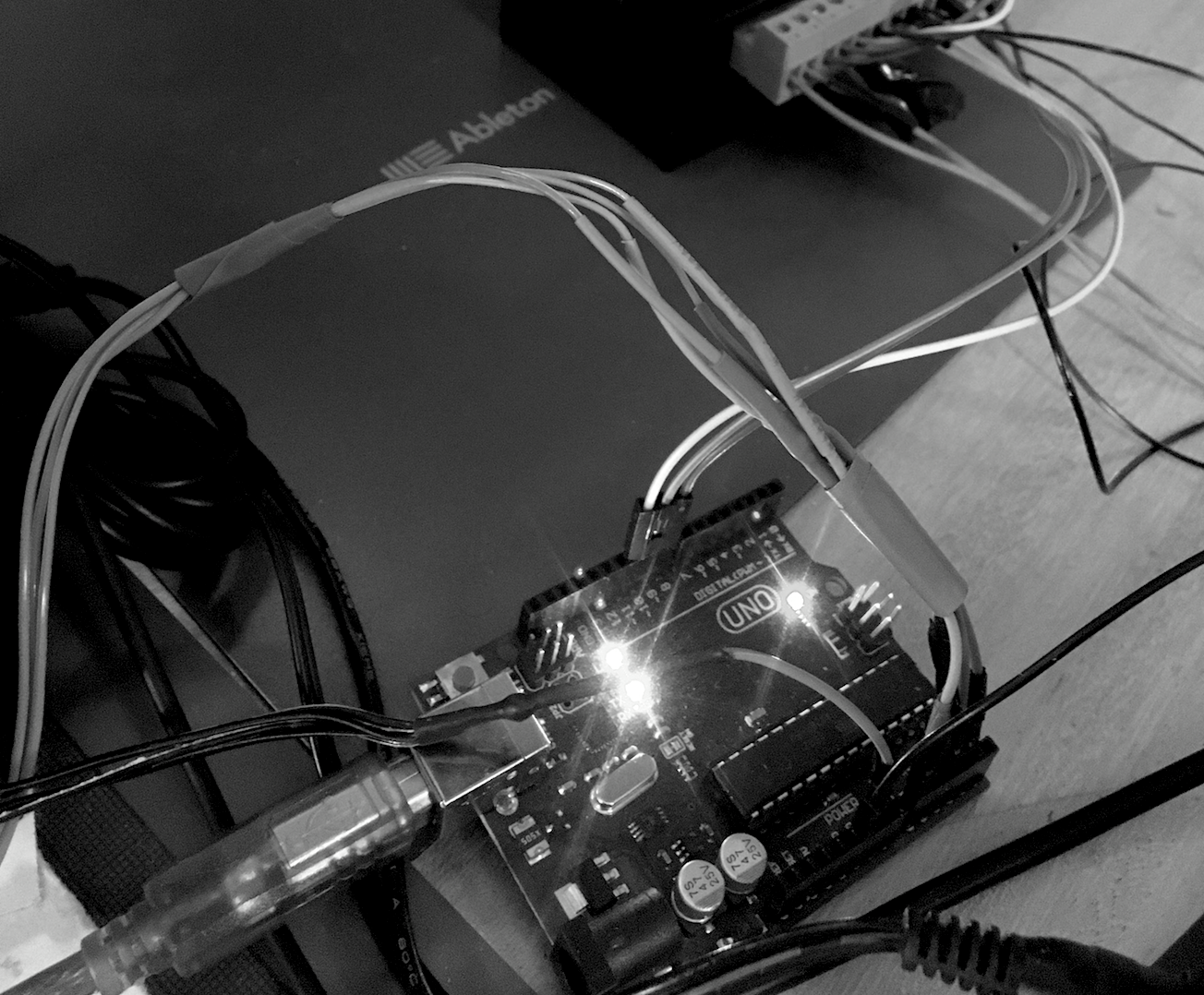

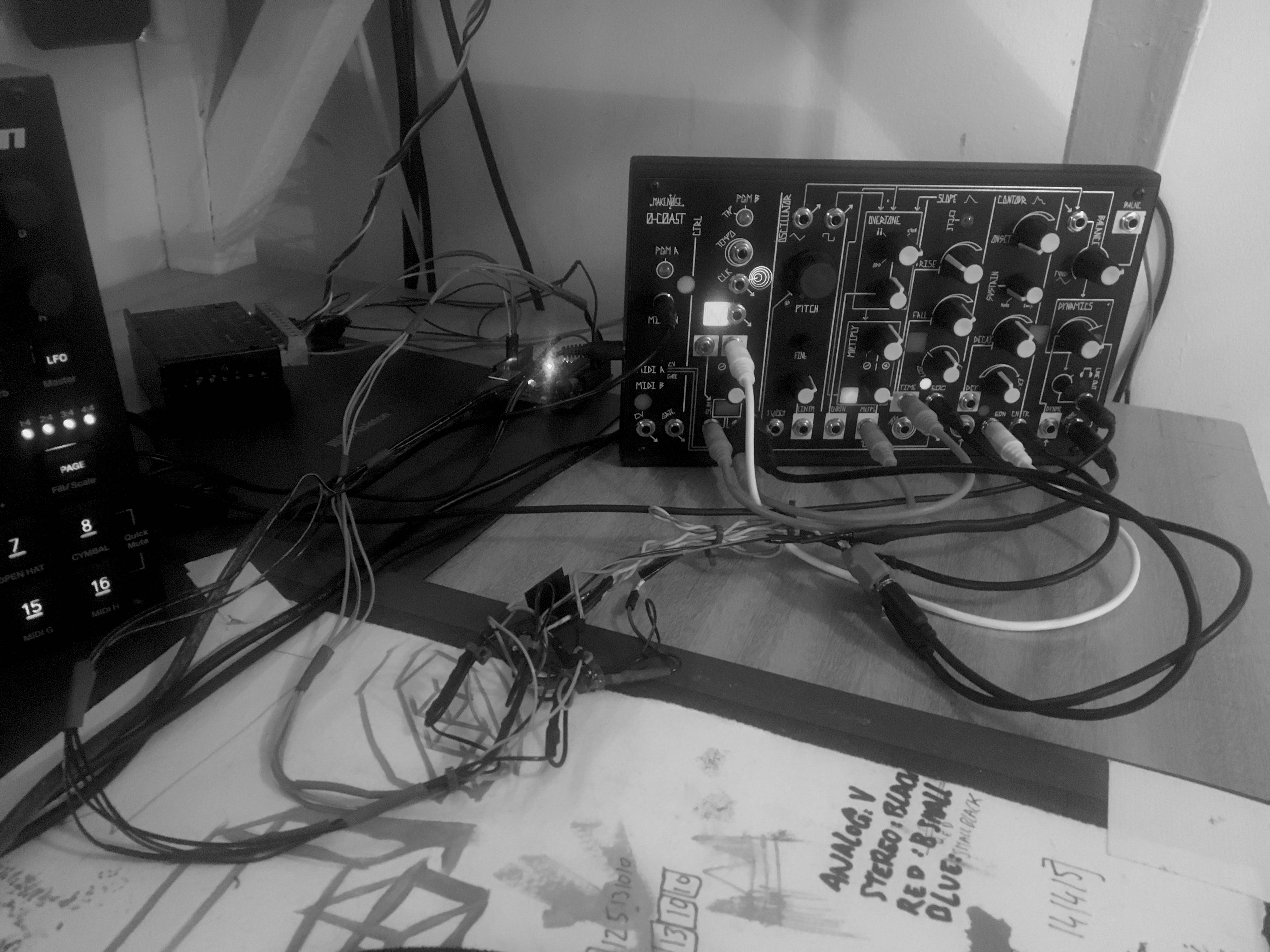

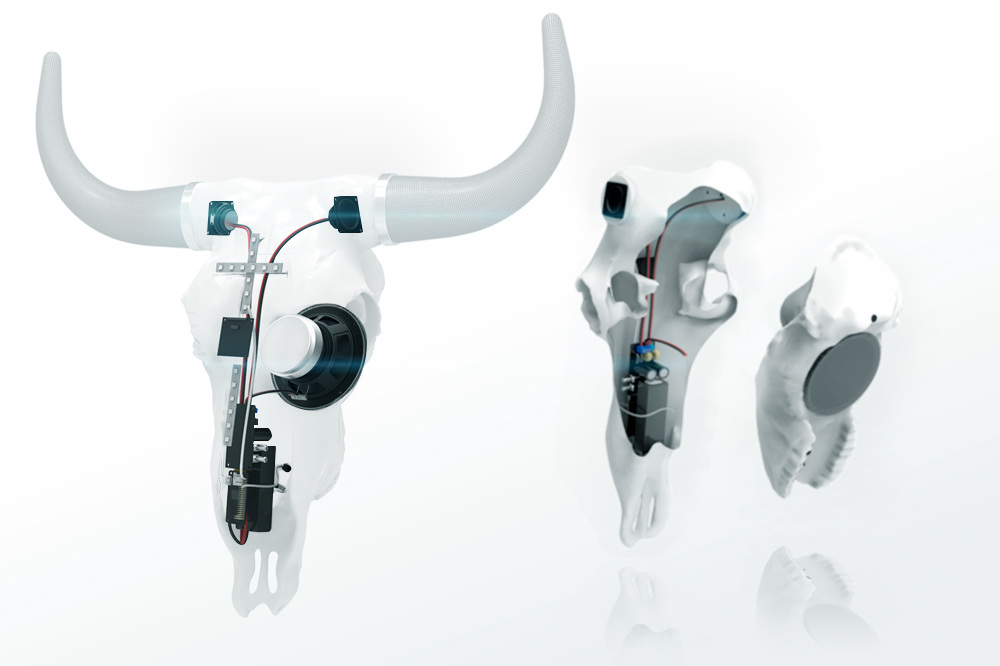

The installation is made of 3 computers running 3 different programs.

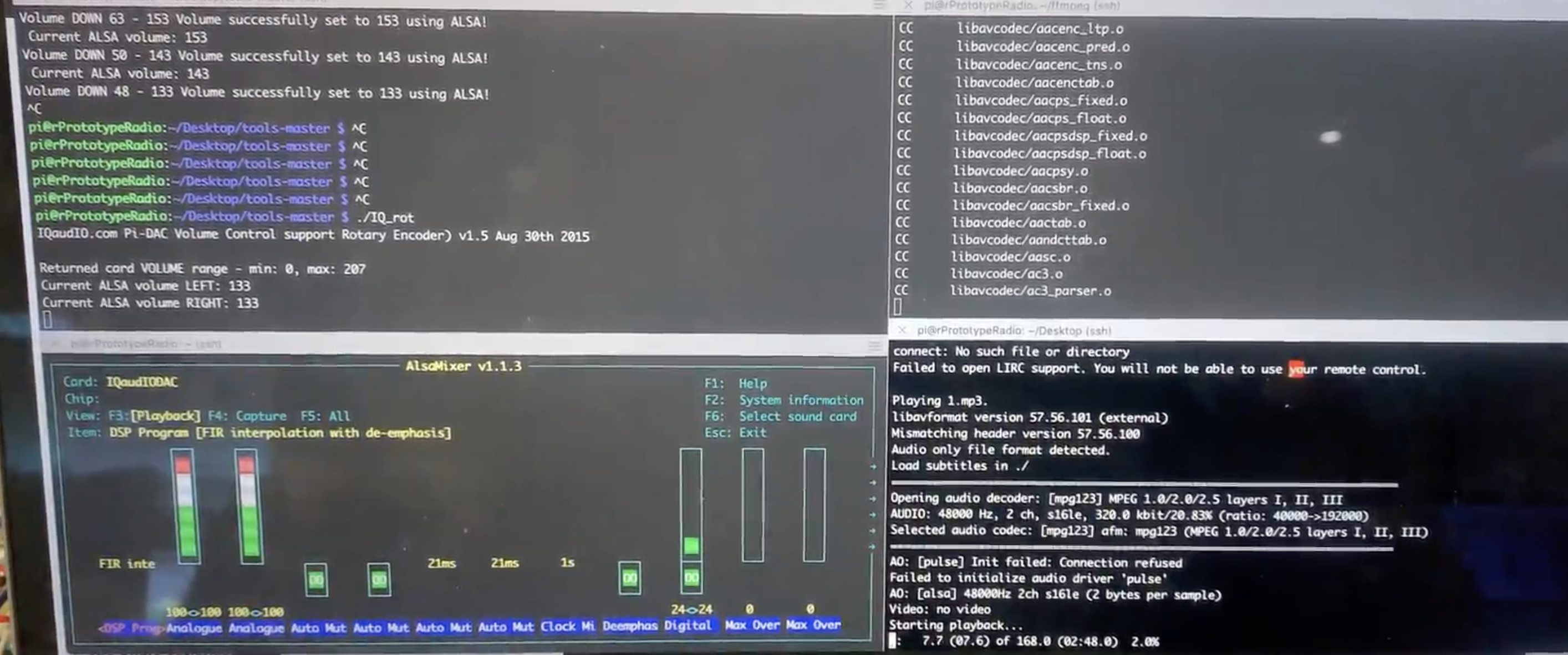

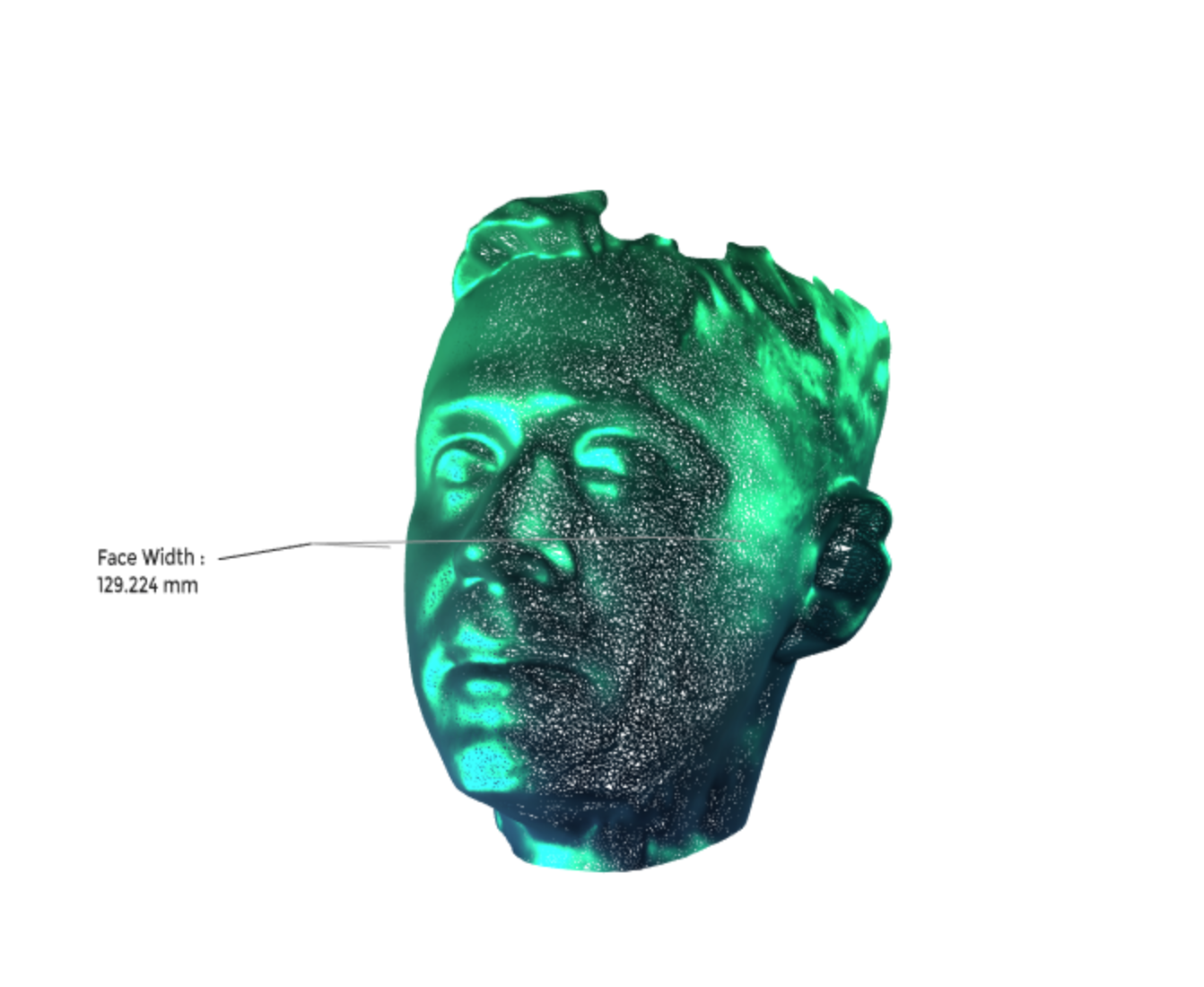

A pose-capture computer using OpenNI 2 (Open Natural Interaction), to analyse where people are looking and how they pose in front of the screen.
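As a rough illustration of how "where people are looking" can be approximated from tracked joints, here is a minimal sketch. It assumes the pose tracker outputs 3D shoulder positions in sensor space (metres, z pointing away from the sensor) and uses torso orientation as a stand-in for gaze; the `Joint` type, the function names and the 30° threshold are all hypothetical, not the installation's actual code.

```python
import math
from dataclasses import dataclass


@dataclass
class Joint:
    # Assumed sensor-space coordinates in metres:
    # x to the right, y up, z away from the sensor towards the visitor.
    x: float
    y: float
    z: float


def torso_yaw_deg(left_shoulder: Joint, right_shoulder: Joint) -> float:
    """Angle (degrees) by which the shoulder line is turned away from the
    sensor's image plane, measured in the horizontal (x, z) plane.
    0 means the visitor stands square-on to the screen."""
    dx = right_shoulder.x - left_shoulder.x
    dz = right_shoulder.z - left_shoulder.z
    return math.degrees(math.atan2(abs(dz), abs(dx)))


def is_facing_screen(left_shoulder: Joint, right_shoulder: Joint,
                     max_yaw_deg: float = 30.0) -> bool:
    # Hypothetical threshold. Shoulders alone cannot distinguish facing
    # towards the screen from facing away, so this assumes visitors
    # are turned towards the sensor.
    return torso_yaw_deg(left_shoulder, right_shoulder) <= max_yaw_deg


square_on = (Joint(-0.2, 1.4, 2.0), Joint(0.2, 1.4, 2.0))
turned = (Joint(-0.2, 1.4, 2.0), Joint(0.0, 1.4, 2.2))
print(is_facing_screen(*square_on), is_facing_screen(*turned))
```

A real setup would more likely combine this with head orientation, but torso yaw is cheap to compute per frame and robust at a distance.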

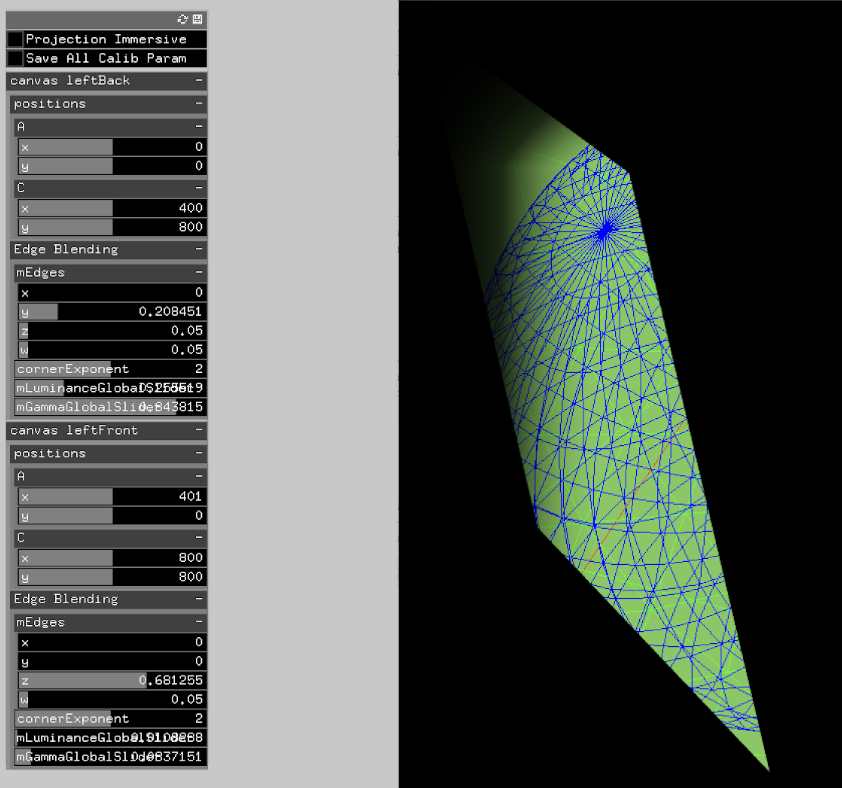

A dedicated machine learning computer, NVIDIA's Jetson Nano, which ran a custom-made reinforcement learning model. The model learned which images appealed most to people by measuring how long they stayed in front of the piece. It could then control what was shown on screen by sending OSC signals to the main collage app.
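The custom model itself isn't described here, so the sketch below swaps in a much simpler technique to show the core idea of learning from dwell time: an epsilon-greedy multi-armed bandit, where each arm is a hypothetical image category and the reward is how many seconds visitors stayed. The class name, the categories and `epsilon` are illustrative assumptions.

```python
import random


class DwellTimeBandit:
    """Epsilon-greedy bandit (a simplified stand-in for the custom RL model).
    Arms are hypothetical image categories; reward is dwell time in seconds."""

    def __init__(self, arms, epsilon=0.1, seed=None):
        self.arms = list(arms)
        self.epsilon = epsilon
        self.counts = {a: 0 for a in self.arms}
        self.values = {a: 0.0 for a in self.arms}  # running mean dwell time per arm
        self.rng = random.Random(seed)

    def select(self):
        # Explore a random arm with probability epsilon,
        # otherwise exploit the arm with the best mean dwell time so far.
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda a: self.values[a])

    def update(self, arm, dwell_seconds):
        # Incremental mean: v <- v + (r - v) / n
        self.counts[arm] += 1
        n = self.counts[arm]
        self.values[arm] += (dwell_seconds - self.values[arm]) / n


bandit = DwellTimeBandit(["city", "nature", "people"], epsilon=0.1, seed=1)
arm = bandit.select()           # category to push to the collage app
bandit.update(arm, 12.5)        # visitors stayed 12.5 s while it was shown
```

Exploration matters here because audience taste drifts over a festival day; a small `epsilon` keeps occasionally trying less popular categories instead of locking onto an early favourite.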

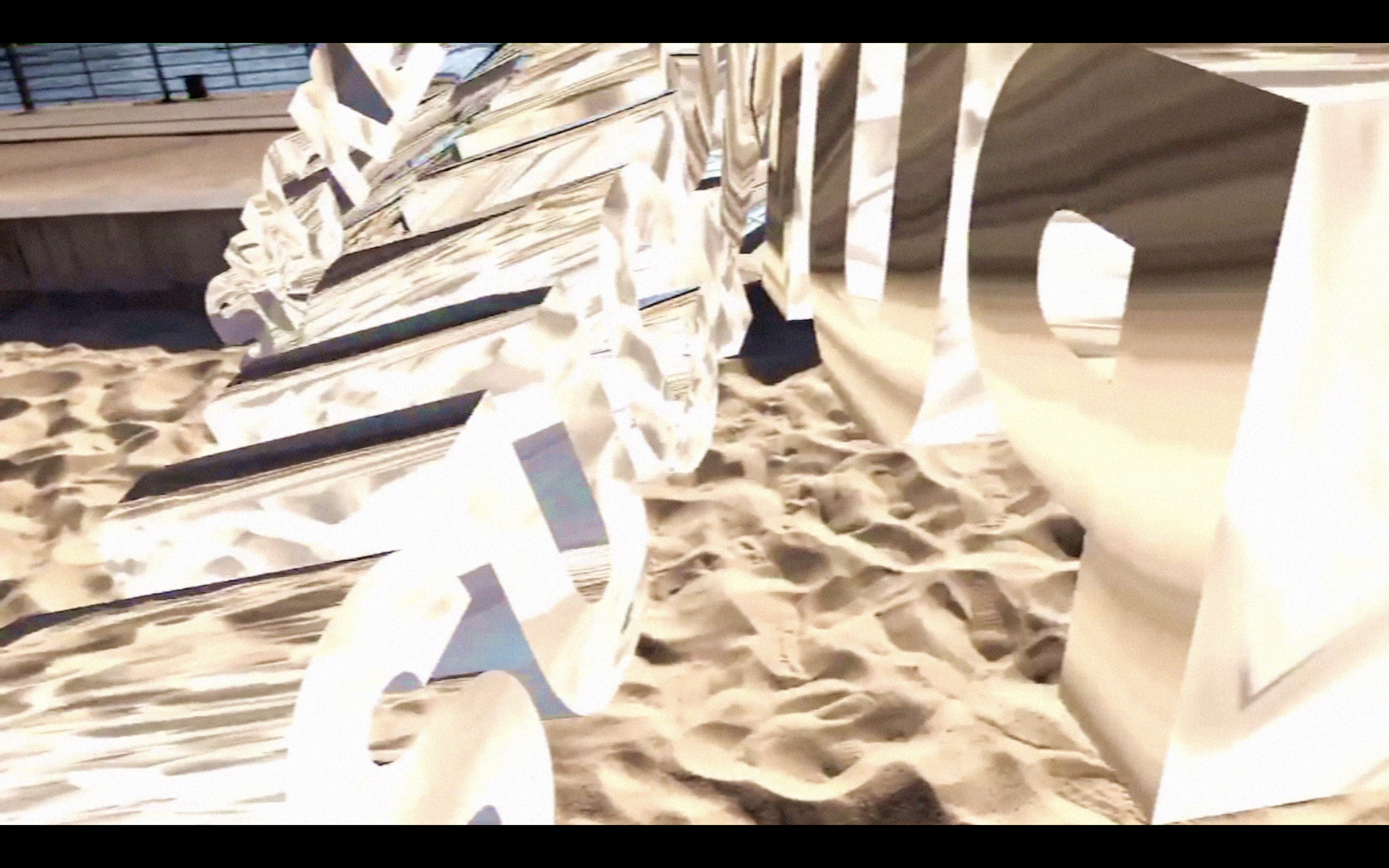

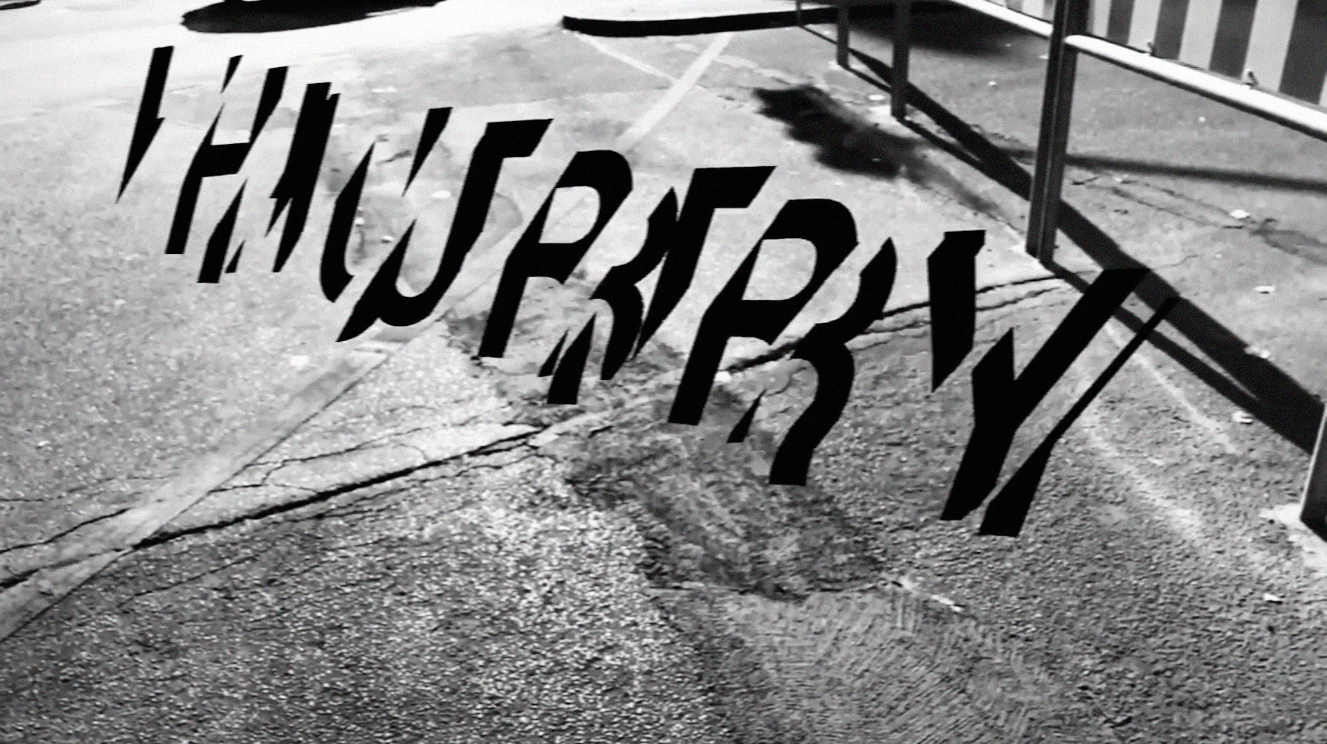

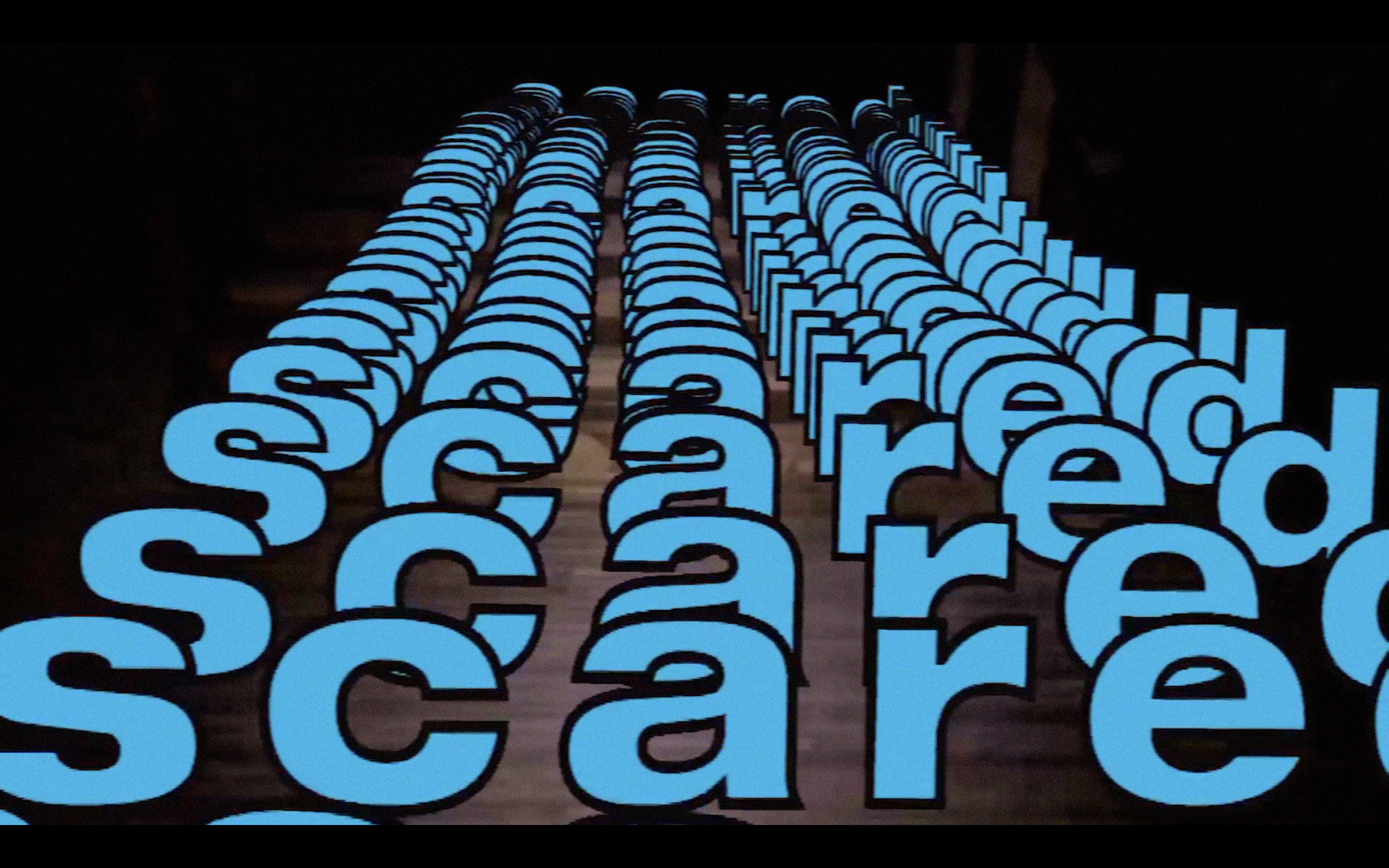

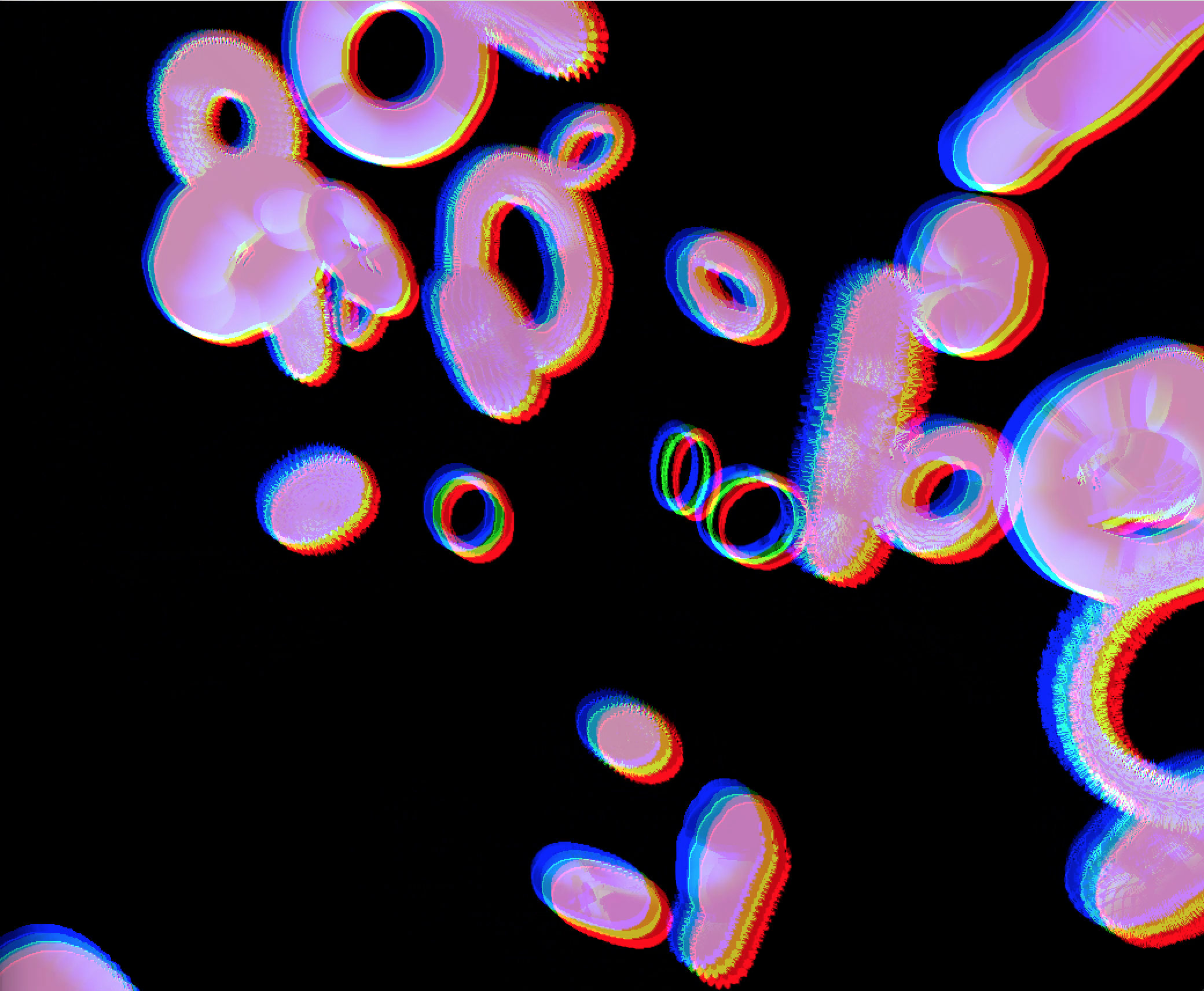

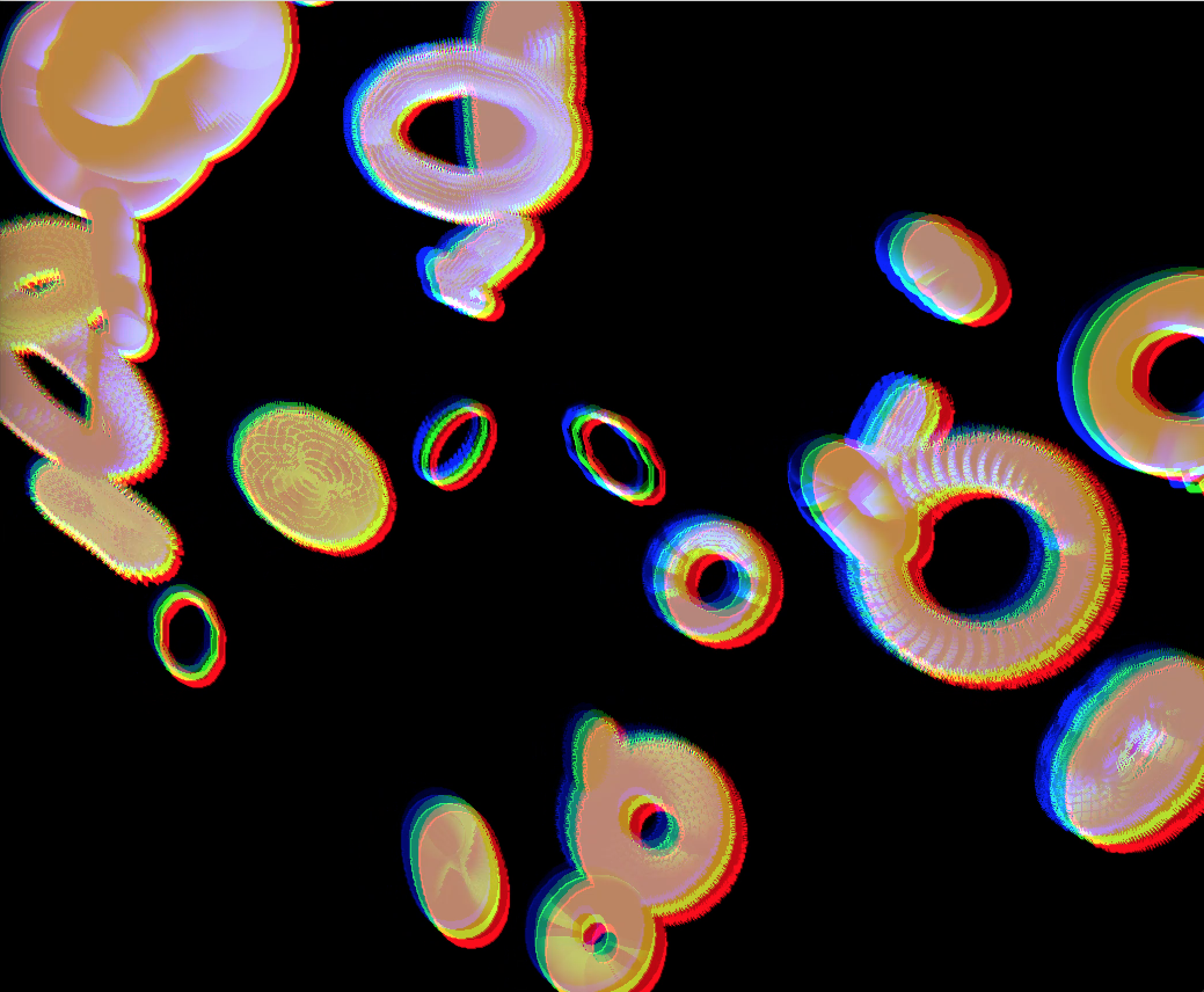

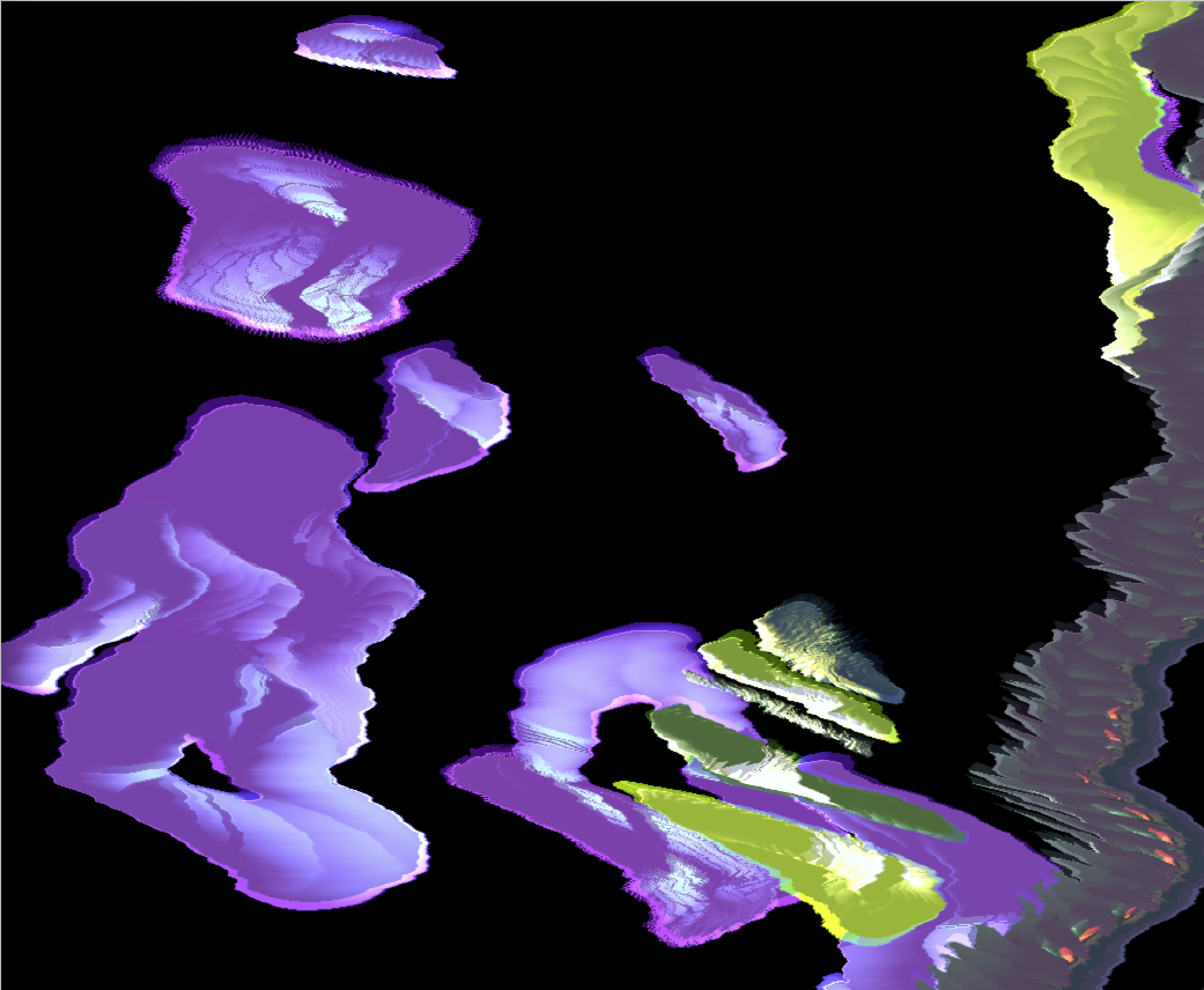

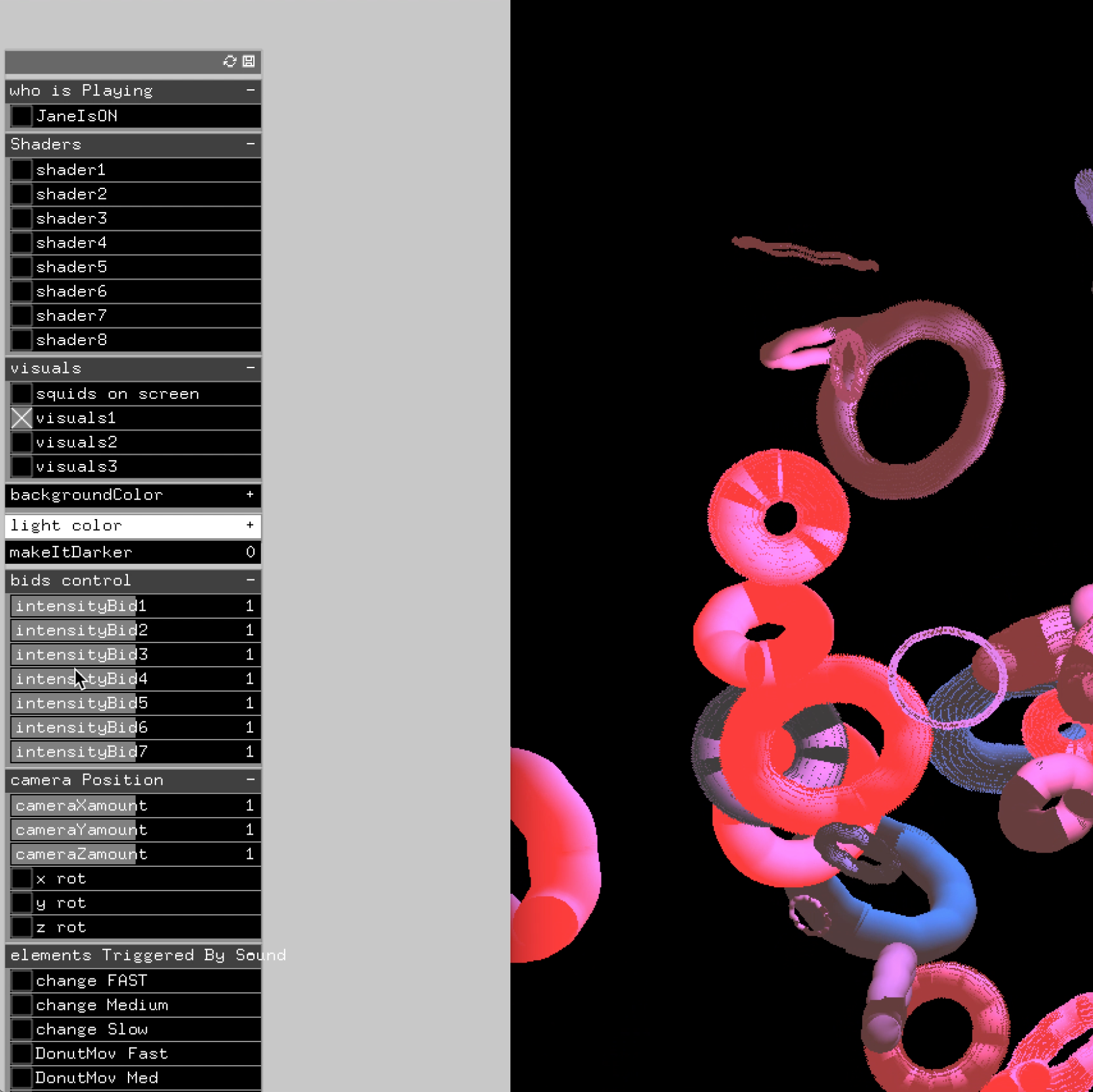

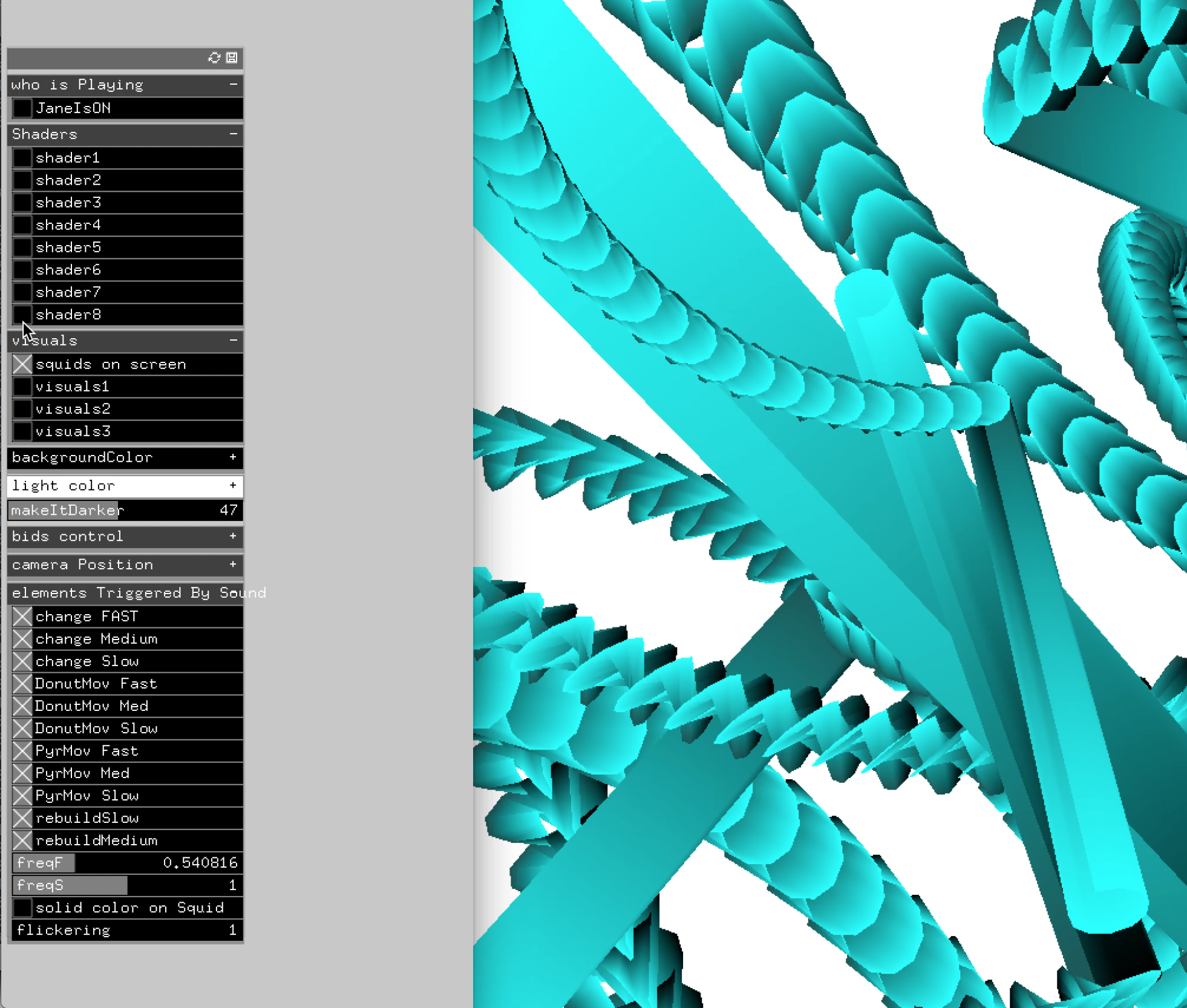

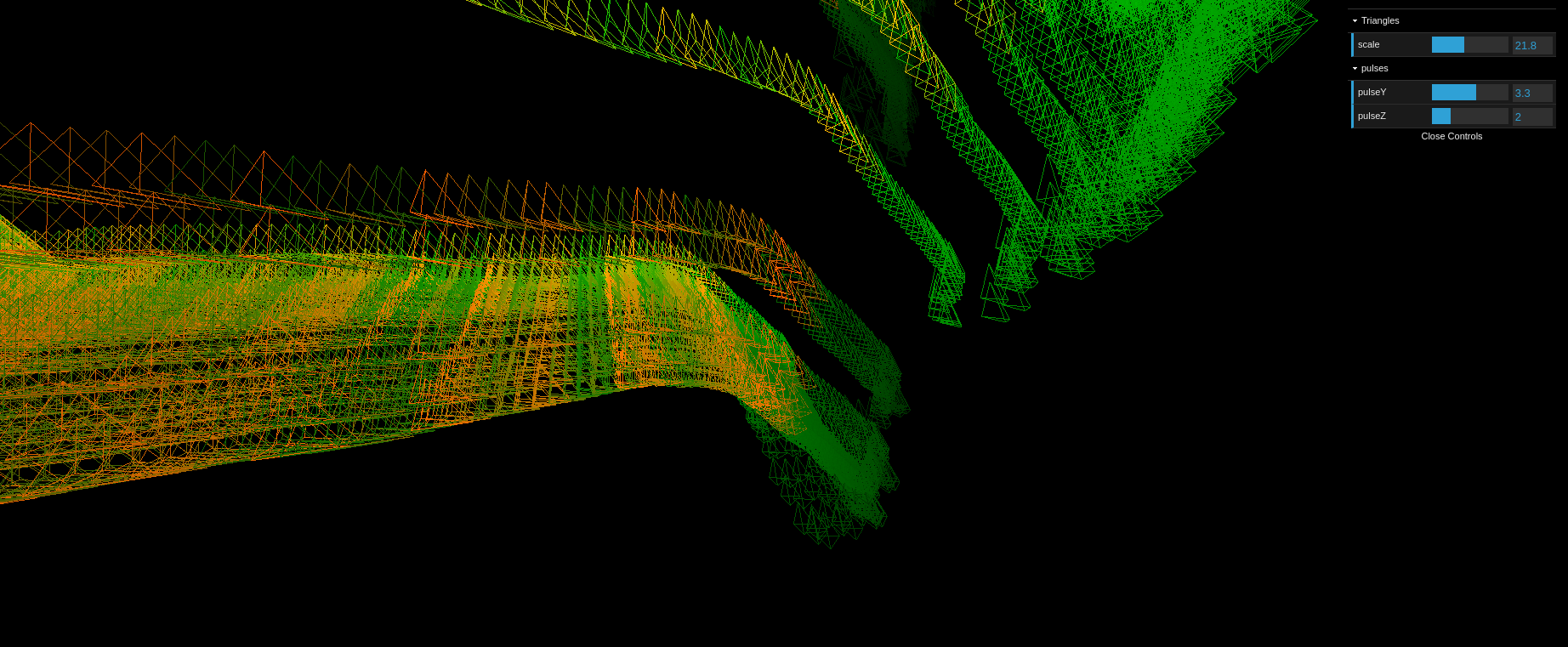

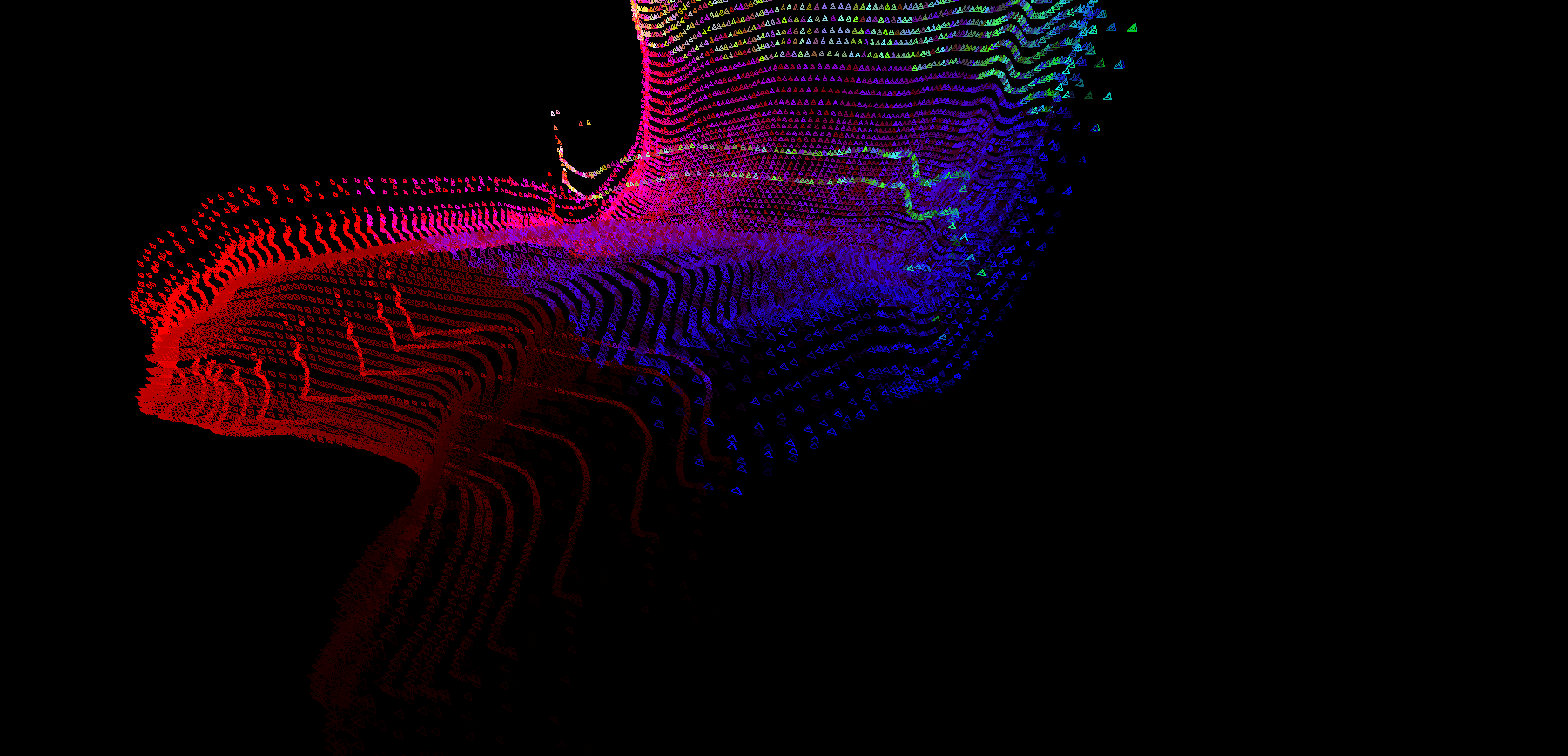

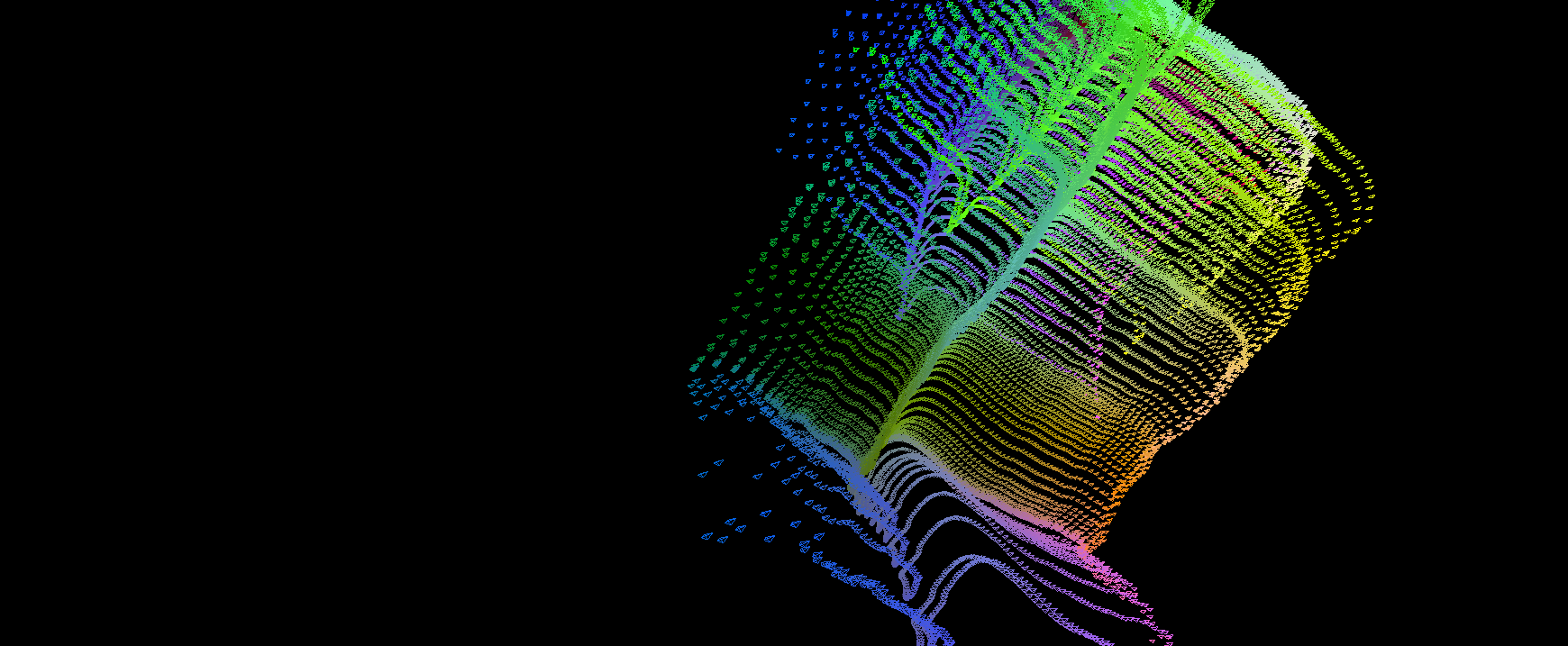

A collage app, which pulled from a pool of videos and images and arranged them in an ever-changing way, with different effects and composition parameters. All those parameters were controlled by the machine learning model.

Masino Bay is a creative technology studio formed by Simon Oxley (GreatCoat Film), Gemma Yin, and me.

For this project, we were helped by Malte Lichtenberg, a PhD student in machine learning, who handled the core machine learning algorithms. He also helped on site, along with Jayson Haebich, babysitting the installation.

Gemma handled the creative direction, and Simon the production and PR.

Technology used: openFrameworks, machine learning, Jetson Nano, LED screen, networking.